DeepSeek AI released DeepSeek-OCR 2, an open source document OCR and understanding system that restructures its vision encoder to read pages in a causal order that is closer to how humans scan complex documents. The key component is DeepEncoder V2, a language model style transformer that converts a 2D page into a 1D sequence of visual tokens that already follow a learned reading flow before text decoding starts.

https://github.com/deepseek-ai/DeepSeek-OCR-2

From raster order to causal visual flow

Most multimodal models still flatten images into a fixed raster sequence, top left to bottom right, and apply a transformer with static positional encodings.…

-

We implement an advanced, end-to-end Kornia tutorial and demonstrate how modern, differentiable computer vision can be built entirely in PyTorch. We start by constructing GPU-accelerated, synchronized augmentation pipelines for images, masks, and keypoints, then move into differentiable geometry by optimizing a homography directly through gradient descent. We also show how learned feature matching with LoFTR integrates with Kornia’s RANSAC to estimate robust homographies and produce a simple stitched output, even under constrained or offline-safe conditions. Finally, we ground these ideas in practice by training a lightweight CNN on CIFAR-10 using Kornia’s GPU augmentations, highlighting how research-grade vision pipelines translate naturally…

-

How do you build a single vision language action model that can control many different dual arm robots in the real world? LingBot-VLA is Ant Group Robbyant’s new Vision Language Action foundation model that targets practical robot manipulation in the real world. It is trained on about 20,000 hours of teleoperated bimanual data collected from 9 dual arm robot embodiments and is evaluated on the large scale GM-100 benchmark across 3 platforms. The model is designed for cross morphology generalization, data efficient post training, and high training throughput on commodity GPU clusters.

https://arxiv.org/pdf/2601.18692

Large scale dual arm dataset across 9 robot…

-

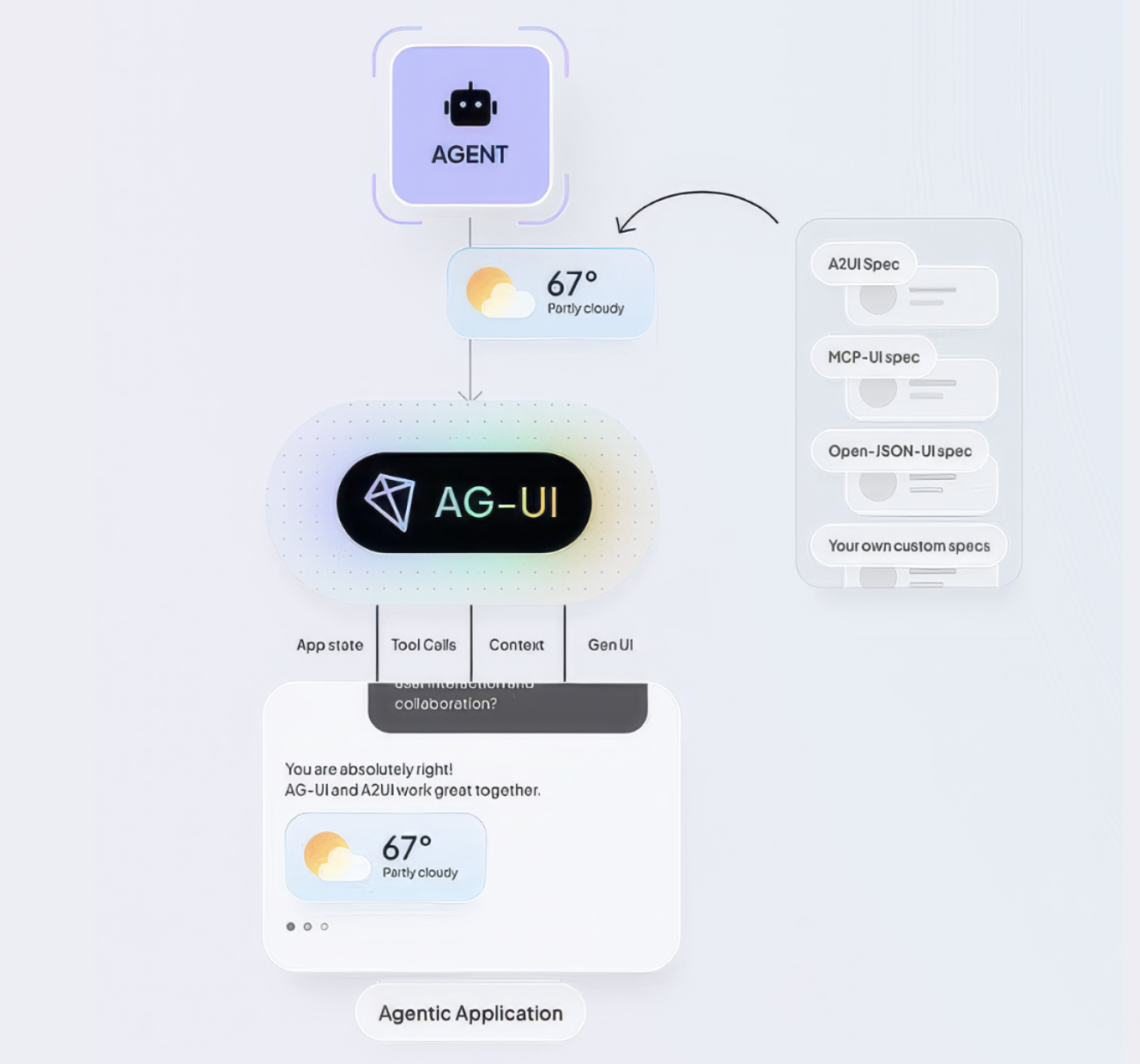

Most AI applications still showcase the model as a chat box. That interface is simple, but it hides what agents are actually doing, such as planning steps, calling tools, and updating state. Generative UI is about letting the agent drive real interface elements, for example tables, charts, forms, and progress indicators, so the experience feels like a product, not a log of tokens.

https://www.copilotkit.ai/blog/the-state-of-agentic-ui-comparing-ag-ui-mcp-ui-and-a2ui-protocols

What is Generative UI?

The CopilotKit team describes Generative UI as any user interface that is partially or fully produced by an AI agent. Instead of only returning text, the agent can drive: stateful components…

-

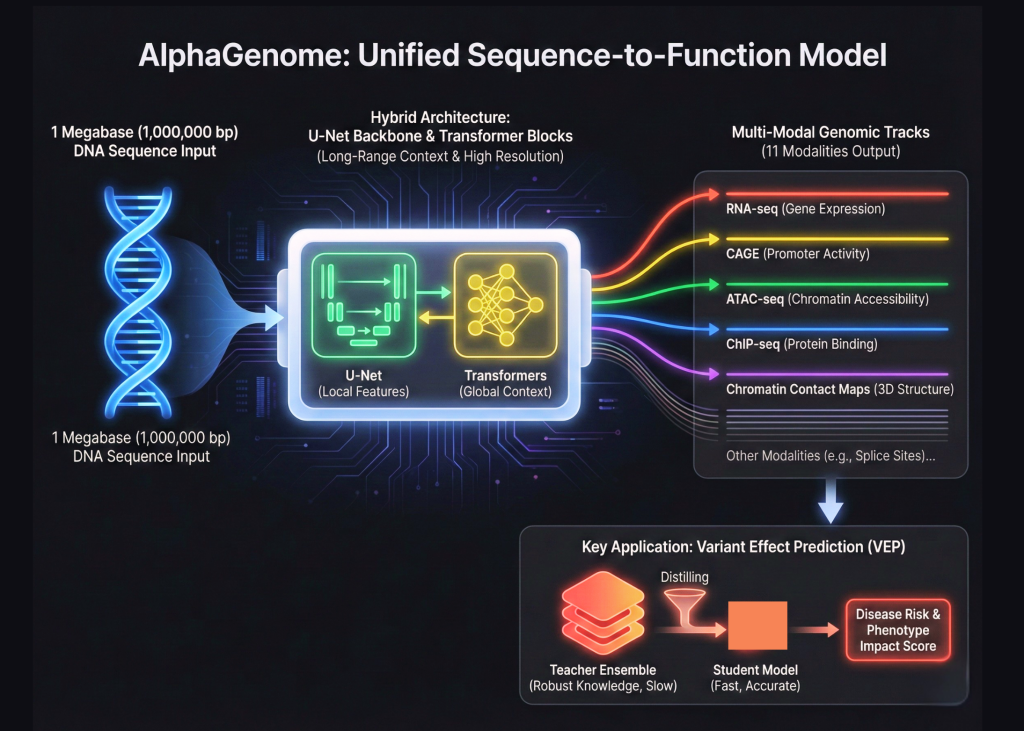

Google DeepMind is expanding its biological toolkit beyond the world of protein folding. After the success of AlphaFold, Google’s research team has introduced AlphaGenome, a unified deep learning model designed for sequence to function genomics. This represents a major shift in how we model the human genome. AlphaGenome does not treat DNA as simple text. Instead, it processes 1,000,000 base pair windows of raw DNA to predict the functional state of a cell.

Bridging the Scale Gap with Hybrid Architectures

The complexity of the human genome comes from its scale. Most existing models struggle to see the…

-

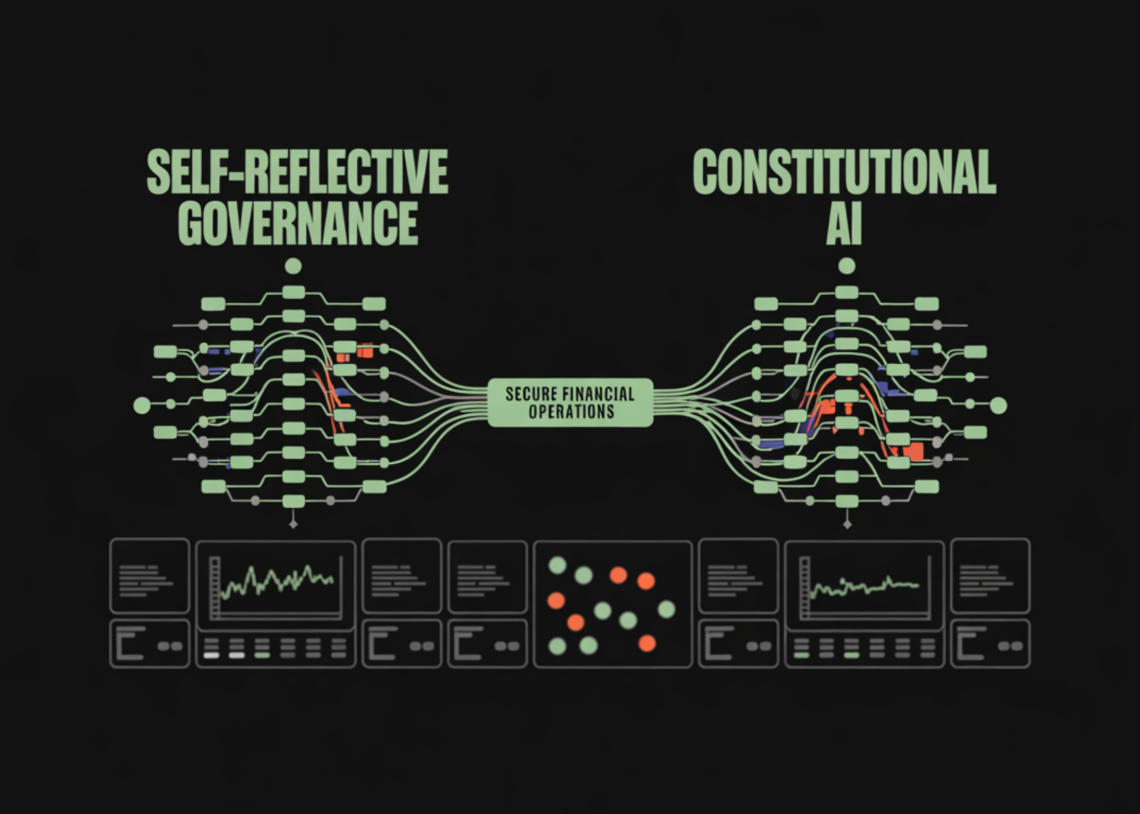

In this tutorial, we implement a dual-agent governance system that applies Constitutional AI principles to financial operations. We demonstrate how we separate execution and oversight by pairing a Worker Agent that performs financial actions with an Auditor Agent that enforces policy, safety, and compliance. By encoding governance rules directly into a formal constitution and combining rule-based checks with AI-assisted reasoning, we can build systems that are self-reflective, auditable, and resilient to risky or non-compliant behavior in high-stakes financial workflows. !pip install -q pydantic anthropic python-dotenv import json import re from typing import List, Dict, Any,…

-

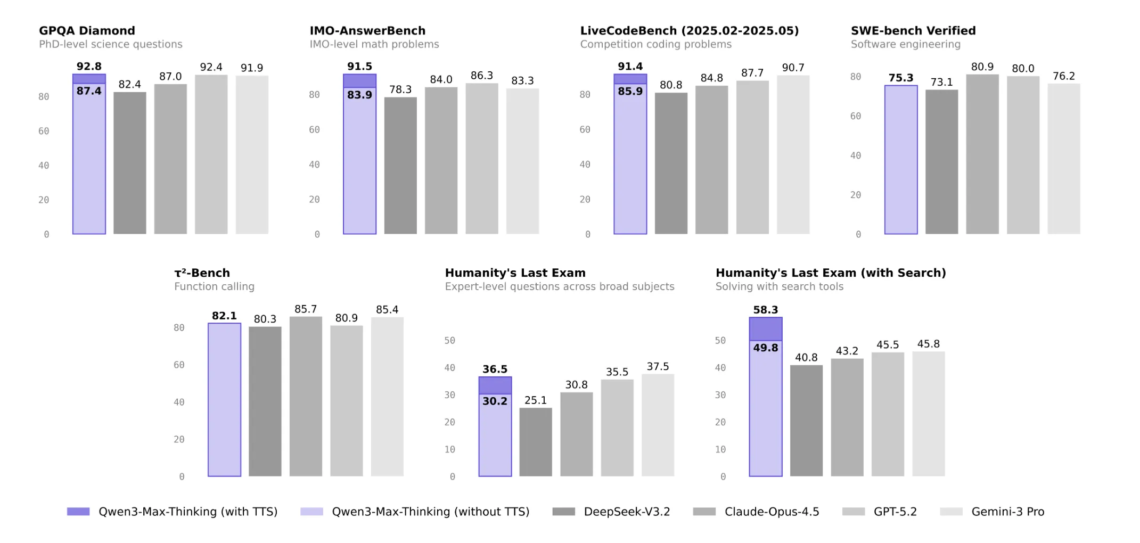

Qwen3-Max-Thinking is Alibaba’s new flagship reasoning model. It does not only scale parameters; it also changes how inference is done, with explicit control over thinking depth and built-in tools for search, memory, and code execution.

https://qwen.ai/blog?id=qwen3-max-thinking

Model scale, data, and deployment

Qwen3-Max-Thinking is a trillion-parameter MoE flagship LLM pretrained on 36T tokens and built on the Qwen3 family as the top tier reasoning model. The model targets long horizon reasoning and code, not only casual chat. It runs with a context window of 260k tokens, which supports repository scale code, long technical reports, and multi document analysis within a…

-

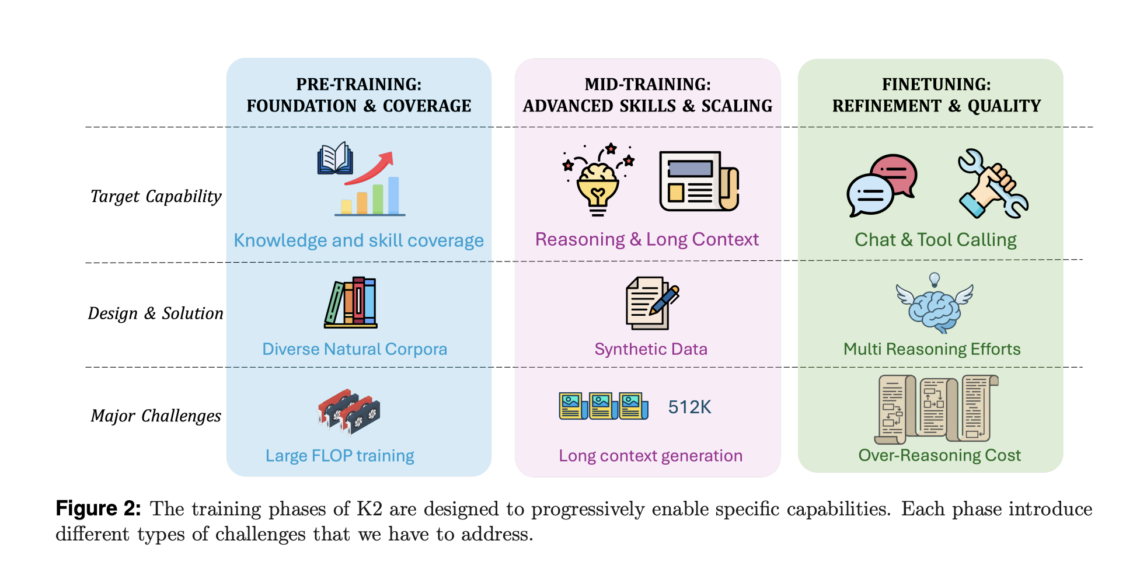

Can a fully sovereign open reasoning model match state of the art systems when every part of its training pipeline is transparent? Researchers from Mohamed bin Zayed University of Artificial Intelligence (MBZUAI) have released K2 Think V2, a fully sovereign reasoning model designed to test how far open and fully documented pipelines can push long horizon reasoning on math, code, and science when the entire stack is open and reproducible. K2 Think V2 takes the 70 billion parameter K2 V2 Instruct base model and applies a carefully engineered reinforcement learning approach to turn it into a high precision reasoning model that…

-

Tencent Hunyuan has open sourced HPC-Ops, a production grade operator library for large language model inference architecture devices. HPC-Ops focuses on low level CUDA kernels for core operators such as Attention, Grouped GEMM, and Fused MoE, and exposes them through a compact C and Python API for integration into existing inference stacks. HPC-Ops runs in large scale internal services. In those deployments it delivers about a 30 percent improvement in queries per minute for Tencent-HY models and about a 17 percent improvement for DeepSeek models on mainstream inference cards. These gains are reported at the service level, so they reflect the cumulative effect of…

-

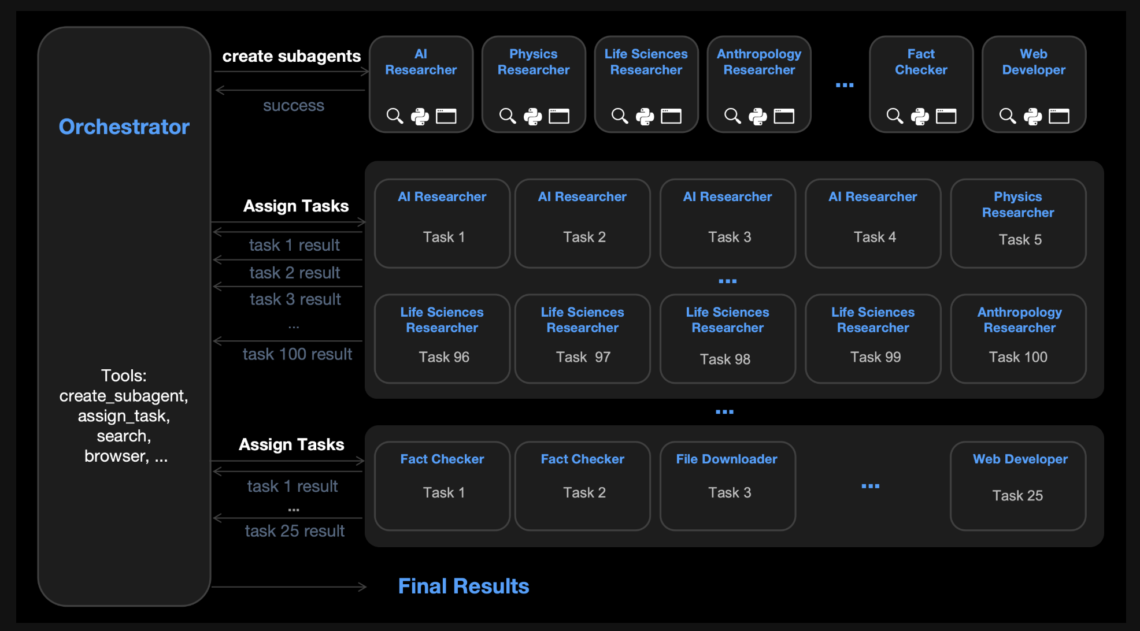

Moonshot AI has released Kimi K2.5 as an open source visual agentic intelligence model. It combines a large Mixture of Experts language backbone, a native vision encoder, and a parallel multi agent system called Agent Swarm. The model targets coding, multimodal reasoning, and deep web research with strong benchmark results on agentic, vision, and coding suites.

Model Architecture and Training

Kimi K2.5 is a Mixture of Experts model with 1T total parameters and about 32B activated parameters per token. The network has 61 layers. It uses 384 experts, with 8 experts selected per token plus 1 shared expert. The attention…
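
The expert counts quoted for Kimi K2.5 (384 routed experts, 8 selected per token, plus 1 shared expert) can be illustrated with a minimal top-k routing sketch. This is a generic Mixture of Experts illustration, not Moonshot AI's implementation: the function name `route_token`, the random router logits, and the choice to softmax over only the selected logits are all assumptions made for the example.

```python
import math
import random

NUM_EXPERTS = 384  # routed experts, as stated in the text
TOP_K = 8          # experts selected per token, as stated in the text


def route_token(router_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts.

    Hypothetical helper: normalizing with a softmax over the selected logits
    is an assumption, not a documented detail of Kimi K2.5.
    """
    # Indices of the top_k largest router logits.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Numerically stable softmax restricted to the selected experts,
    # so the returned weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


random.seed(0)
# Stand-in router scores for a single token (illustrative random values).
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
selected = route_token(logits)

# The shared expert runs for every token in addition to the routed ones.
experts_run_per_token = len(selected) + 1
print(experts_run_per_token)  # 9
```

Only 9 of the 385 expert blocks run for any given token in this sketch, which is the general reason a model of this kind can have far more total parameters than activated parameters per token.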