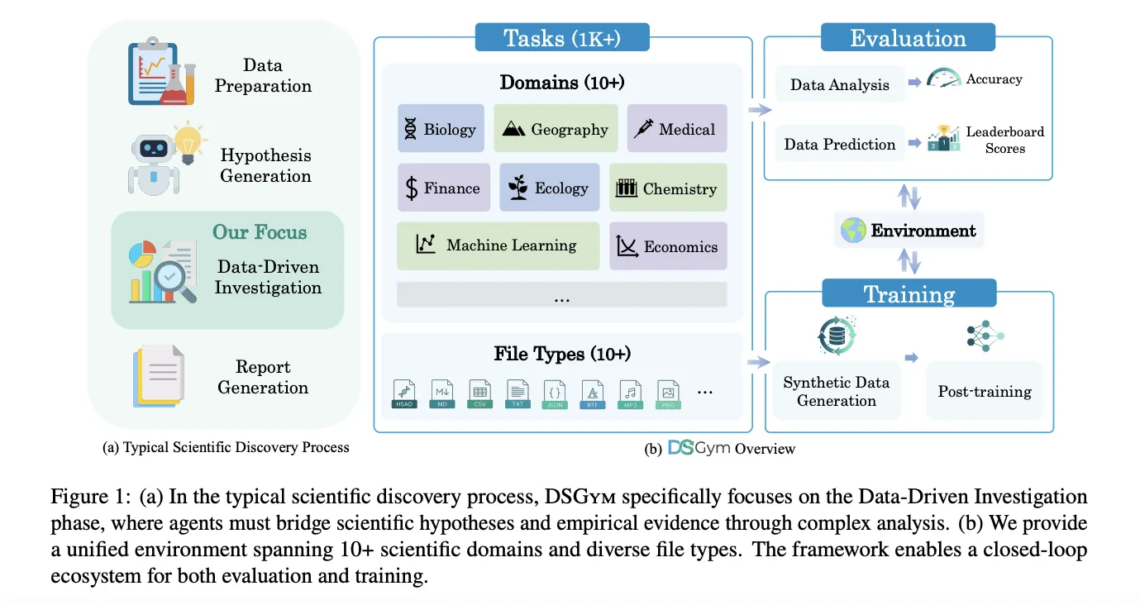

Data science agents should inspect datasets, design workflows, run code, and return verifiable answers, not just autocomplete Pandas code. DSGym, introduced by researchers from Stanford University, Together AI, Duke University, and Harvard University, is a framework that evaluates and trains such agents across more than 1,000 data science challenges with expert-curated ground truth and a consistent post-training pipeline.

https://arxiv.org/pdf/2601.16344

Why existing benchmarks fall short

The research team first probes existing benchmarks that claim to test data-aware agents. When data files are hidden, models still retain high accuracy. On QRData the average drop is 40.5 percent, on DAEval…

-

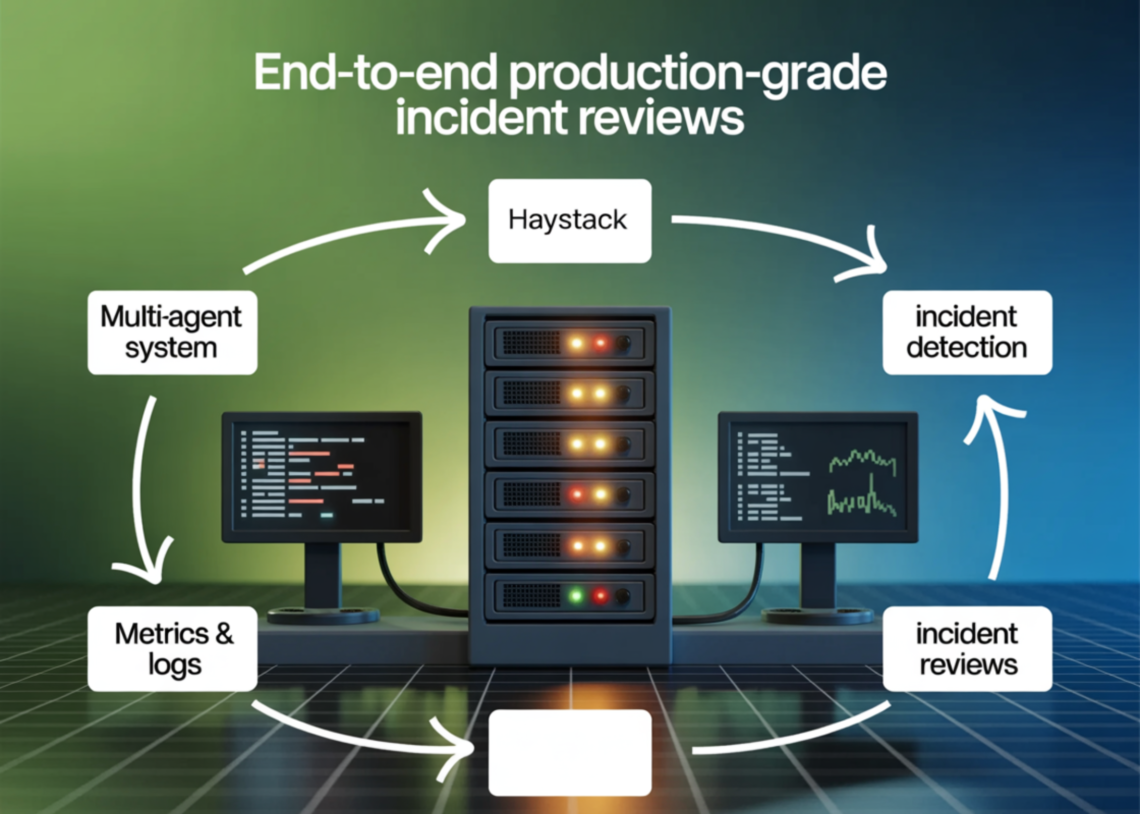

    @tool
    def sql_investigate(query: str) -> dict:
        try:
            df = con.execute(query).df()
            head = df.head(30)
            return {
                "rows": int(len(df)),
                "columns": list(df.columns),
                "preview": head.to_dict(orient="records")
            }
        except Exception as e:
            return {"error": str(e)}

    @tool
    def log_pattern_scan(window_start_iso: str, window_end_iso: str, top_k: int = 8) -> dict:
        ws = pd.to_datetime(window_start_iso)
        we = pd.to_datetime(window_end_iso)
        df = logs_df[(logs_df["ts"] >= ws) & (logs_df["ts"] <= we)].copy()
        if df.empty:
            return {"rows": 0, "top_error_kinds": [], "top_services": [], "top_endpoints": []}
        df["error_kind_norm"] = df["error_kind"].fillna("").replace("", "NONE")
        err = df[df["level"].isin(["WARN","ERROR"])].copy()
        top_err = err["error_kind_norm"].value_counts().head(int(top_k)).to_dict()
        top_svc = err["service"].value_counts().head(int(top_k)).to_dict()
        top_ep = err["endpoint"].value_counts().head(int(top_k)).to_dict()
        by_region = err.groupby("region").size().sort_values(ascending=False).head(int(top_k)).to_dict()
        p95_latency = float(np.percentile(df["latency_ms"].values, 95))
        return {
            "rows": int(len(df)),
            "warn_error_rows": int(len(err)),
            "p95_latency_ms": p95_latency,
            "top_error_kinds": top_err,…

-

For decades, weather prediction has been the exclusive domain of massive government supercomputers running complex physics-based equations. NVIDIA aims to lower that barrier with the release of the Earth-2 family of open models and tools for AI weather and climate prediction, which it presents as accessible to virtually anyone, from tech startups to national meteorological agencies. NVIDIA unveiled three new models built on novel architectures: Atlas, StormScope, and HealDA. These tools promise to accelerate forecasting by orders of magnitude while delivering accuracy that rivals or exceeds traditional methods.

The Democratization of Weather Intelligence

Historically, running…

-

Clawdbot is an open-source personal AI assistant that you run on your own hardware. It connects large language models from providers such as Anthropic and OpenAI to real tools such as messaging apps, files, shell, browser, and smart home devices, while keeping the orchestration layer under your control. The interesting part is not that Clawdbot chats; it is that the project ships a concrete architecture for local-first agents and a typed workflow engine called Lobster that turns model calls into deterministic pipelines.

Architecture: Gateway, Nodes and Skills

At the center of Clawdbot is the Gateway process. The Gateway…

-

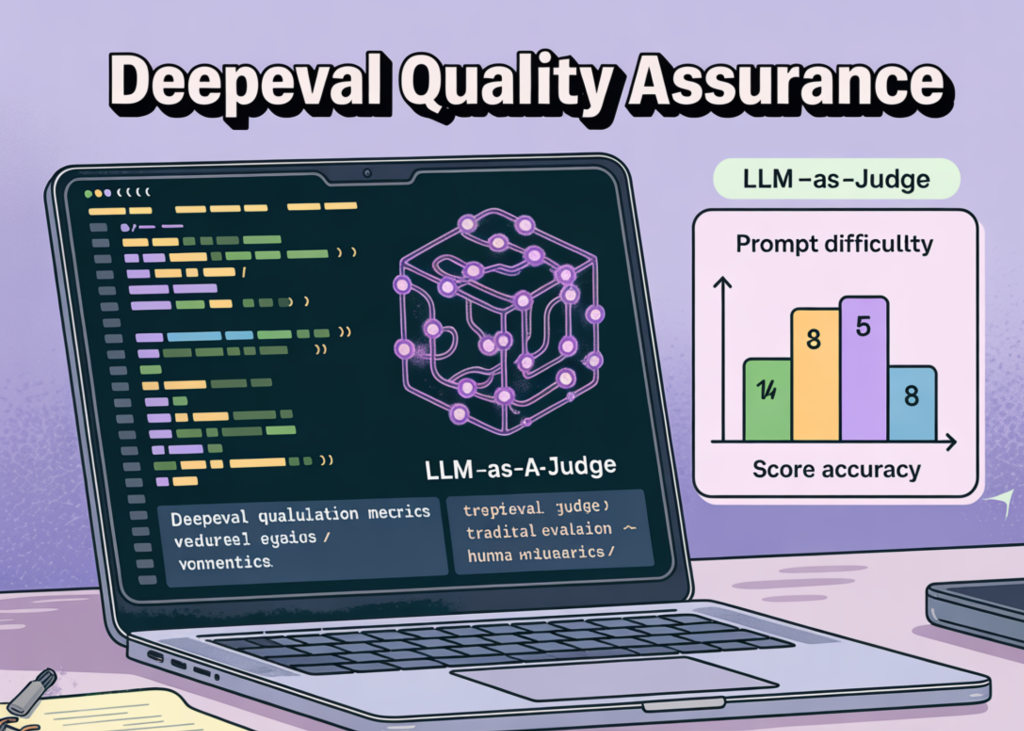

We begin this tutorial by configuring an evaluation environment built around the DeepEval framework, which brings unit-testing rigor to our LLM applications. By bridging the gap between raw retrieval and final generation, we implement a system that treats model outputs as testable code and uses LLM-as-a-judge metrics to quantify performance. We move beyond manual inspection by building a structured pipeline in which every query, retrieved context, and generated response is validated against rigorous, academic-standard metrics.

    import sys, os, textwrap, json, math, re
    from getpass import getpass
    print("🔧 Hardening environment (prevents common Colab/py3.12…
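To make the idea of treating model outputs as testable code concrete, here is a minimal sketch of a threshold-based check over a (query, retrieved context, response) case. It does not use DeepEval's API: `RagCase`, `overlap_judge`, and `check_case` are hypothetical names, and the word-overlap scorer is only a stand-in for a real LLM-as-a-judge metric.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class RagCase:
    """One evaluation case (hypothetical structure, not a DeepEval class)."""
    query: str
    retrieved_context: List[str]
    response: str


def overlap_judge(case: RagCase) -> float:
    """Stand-in judge: fraction of response words that appear in the context.

    A real pipeline would call an LLM judge here; this keeps the sketch offline.
    """
    context_words = set(" ".join(case.retrieved_context).lower().split())
    response_words = case.response.lower().split()
    if not response_words:
        return 0.0
    hits = sum(word in context_words for word in response_words)
    return hits / len(response_words)


def check_case(case: RagCase, judge: Callable[[RagCase], float], threshold: float) -> float:
    """Fail like a unit test when the judged score falls below the threshold."""
    score = judge(case)
    assert score >= threshold, f"score {score:.2f} below threshold {threshold:.2f}"
    return score


grounded = RagCase(
    query="What is the capital of France?",
    retrieved_context=["paris is the capital of france"],
    response="paris is the capital",
)
ungrounded = RagCase(
    query="What is the capital of France?",
    retrieved_context=["paris is the capital of france"],
    response="berlin is the capital",
)

print(check_case(grounded, overlap_judge, threshold=0.8))
try:
    check_case(ungrounded, overlap_judge, threshold=0.8)
except AssertionError as err:
    print("failed:", err)
```

Because the check raises `AssertionError`, it can be dropped into an ordinary test runner; swapping `overlap_judge` for a model-backed scorer leaves the harness unchanged.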

-

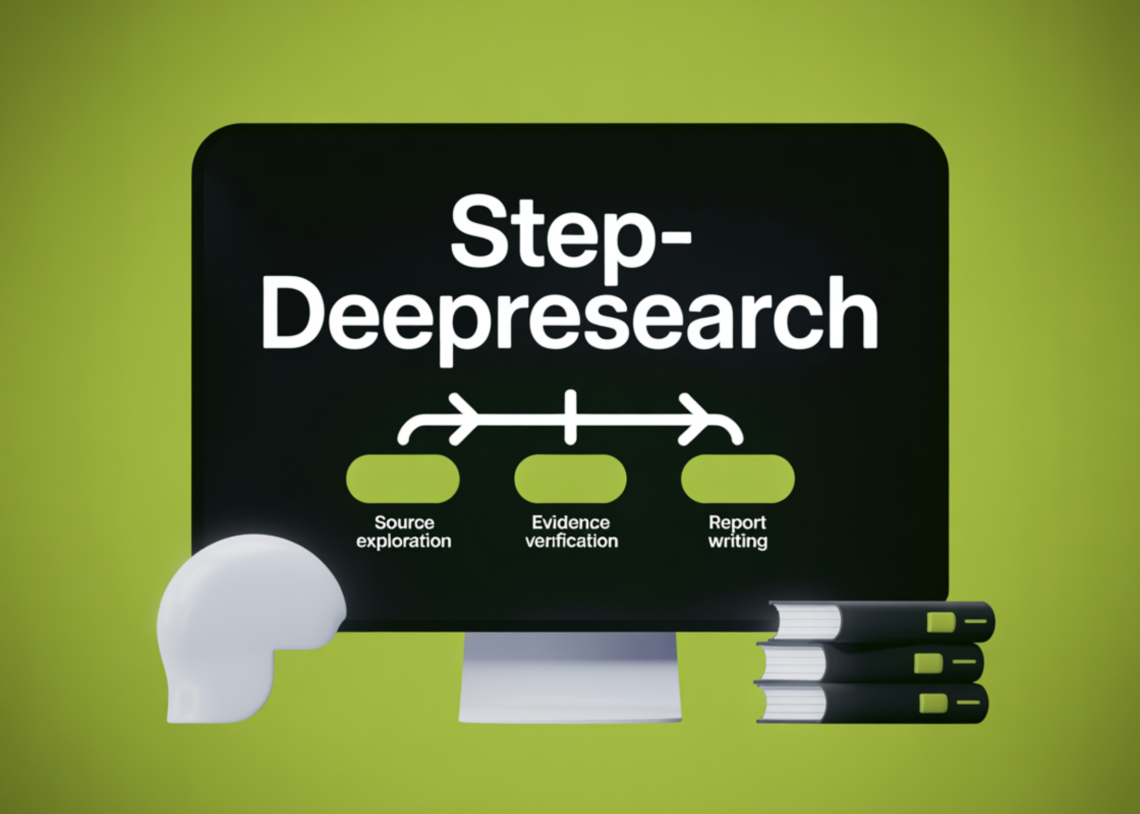

StepFun has introduced Step-DeepResearch, a 32B-parameter end-to-end deep research agent that aims to turn web search into actual research workflows with long-horizon reasoning, tool use, and structured reporting. The model is built on Qwen2.5 32B-Base and is trained to act as a single agent that plans, explores sources, verifies evidence, and writes reports with citations, while keeping inference cost low.

From Search to Deep Research

Most existing web agents are tuned for multi-hop question-answering benchmarks. They try to match ground truth answers for short questions. This is closer to targeted retrieval than to real research. Deep…

-

    def visualize_results(df, priority_scores, feature_importance):
        fig, axes = plt.subplots(2, 3, figsize=(18, 10))
        fig.suptitle('Vulnerability Scanner - ML Analysis Dashboard', fontsize=16, fontweight="bold")
        axes[0, 0].hist(priority_scores, bins=30, color="crimson", alpha=0.7, edgecolor="black")
        axes[0, 0].set_xlabel('Priority Score')
        axes[0, 0].set_ylabel('Frequency')
        axes[0, 0].set_title('Priority Score Distribution')
        axes[0, 0].axvline(np.percentile(priority_scores, 75), color="orange", linestyle="--", label="75th percentile")
        axes[0, 0].legend()
        axes[0, 1].scatter(df['cvss_score'], priority_scores, alpha=0.6, c=priority_scores, cmap='RdYlGn_r', s=50)
        axes[0, 1].set_xlabel('CVSS Score')
        axes[0, 1].set_ylabel('ML Priority Score')
        axes[0, 1].set_title('CVSS vs ML Priority')
        axes[0, 1].plot([0, 10], [0, 1], 'k--', alpha=0.3)
        severity_counts = df['severity'].value_counts()
        colors = {'CRITICAL': 'darkred', 'HIGH': 'red', 'MEDIUM': 'orange', 'LOW': 'yellow'}
        axes[0, 2].bar(severity_counts.index, severity_counts.values, color=[colors.get(s, 'gray') for s in severity_counts.index])
        axes[0, 2].set_xlabel('Severity')
        axes[0, 2].set_ylabel('Count')
        axes[0, 2].set_title('Severity Distribution')
        axes[0, 2].tick_params(axis="x",…

-

GitHub has opened up the internal agent runtime that powers GitHub Copilot CLI and exposed it as a programmable SDK. The GitHub Copilot SDK, now in technical preview, lets you embed the same agentic execution loop into any application so the agent can plan, invoke tools, edit files, and run commands as part of your own workflows.

What the GitHub Copilot SDK provides

The GitHub Copilot SDK is a multi-platform SDK for integrating the GitHub Copilot Agent into applications and services. It gives programmatic access to the execution loop that already powers GitHub Copilot CLI. Instead of building your own planner…

-

In this tutorial, we build a cost-aware planning agent that deliberately balances output quality against real-world constraints such as token usage, latency, and tool-call budgets. We design the agent to generate multiple candidate actions, estimate their expected costs and benefits, and then select an execution plan that maximizes value while staying within strict budgets. With this, we demonstrate how agentic systems can move beyond “always use the LLM” behavior and instead reason explicitly about trade-offs, efficiency, and resource awareness, which is critical for deploying agents reliably in constrained environments.

    import…
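The selection step described above (choose the set of candidate actions with the highest estimated value that still fits the token, latency, and tool-call budgets) can be sketched as a small constrained search. This is an illustrative sketch, not the tutorial's implementation: `Candidate`, `Budget`, `select_plan`, and all numbers are hypothetical, and the exhaustive search is a stand-in that only suits a handful of candidates.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import List, Sequence


@dataclass(frozen=True)
class Candidate:
    """A candidate action with estimated benefit and costs (all values assumed)."""
    name: str
    value: float
    tokens: int
    latency_ms: int
    tool_calls: int


@dataclass(frozen=True)
class Budget:
    tokens: int
    latency_ms: int
    tool_calls: int


def fits(plan: Sequence[Candidate], budget: Budget) -> bool:
    """True when the summed costs of the plan stay within every budget."""
    return (
        sum(c.tokens for c in plan) <= budget.tokens
        and sum(c.latency_ms for c in plan) <= budget.latency_ms
        and sum(c.tool_calls for c in plan) <= budget.tool_calls
    )


def select_plan(candidates: Sequence[Candidate], budget: Budget) -> List[Candidate]:
    """Try every subset and keep the feasible one with the highest total value.

    Exponential in the number of candidates, so only suitable for small sets.
    """
    best: Sequence[Candidate] = ()
    best_value = 0.0
    for size in range(1, len(candidates) + 1):
        for plan in combinations(candidates, size):
            if fits(plan, budget):
                total = sum(c.value for c in plan)
                if total > best_value:
                    best, best_value = plan, total
    return list(best)


candidates = [
    Candidate("llm_draft", value=0.6, tokens=1200, latency_ms=900, tool_calls=0),
    Candidate("llm_refine", value=0.3, tokens=800, latency_ms=700, tool_calls=0),
    Candidate("web_search", value=0.4, tokens=300, latency_ms=1500, tool_calls=1),
    Candidate("local_heuristic", value=0.2, tokens=0, latency_ms=50, tool_calls=0),
]
budget = Budget(tokens=1600, latency_ms=2500, tool_calls=1)

plan = select_plan(candidates, budget)
print([c.name for c in plan])  # → ['llm_draft', 'web_search', 'local_heuristic']
```

With these numbers, drafting and refining together would exceed the token budget, so the search skips the refinement step and keeps the cheap local heuristic instead. For larger candidate sets, a greedy value-per-cost heuristic or dynamic programming would be the usual substitutes for the exhaustive loop.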

-

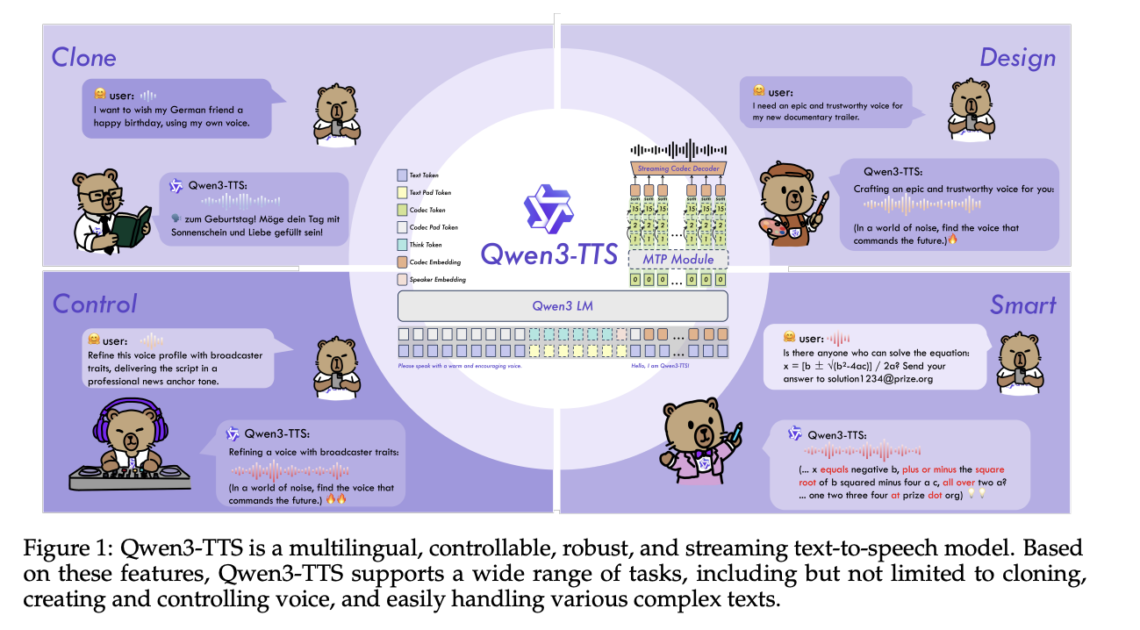

Alibaba Cloud’s Qwen team has open-sourced Qwen3-TTS, a family of multilingual text-to-speech models that target three core tasks in one stack: voice cloning, voice design, and high-quality speech generation.

https://arxiv.org/pdf/2601.15621v1

Model family and capabilities

Qwen3-TTS uses a 12Hz speech tokenizer and two language model sizes, 0.6B and 1.7B, packaged into three main tasks. The open release exposes five models: Qwen3-TTS-12Hz-0.6B-Base and Qwen3-TTS-12Hz-1.7B-Base for voice cloning and generic TTS, Qwen3-TTS-12Hz-0.6B-CustomVoice and Qwen3-TTS-12Hz-1.7B-CustomVoice for promptable preset speakers, and Qwen3-TTS-12Hz-1.7B-VoiceDesign for free-form voice creation from natural language descriptions, along with the Qwen3-TTS-Tokenizer-12Hz codec. All models support 10 languages: Chinese, English, Japanese,…