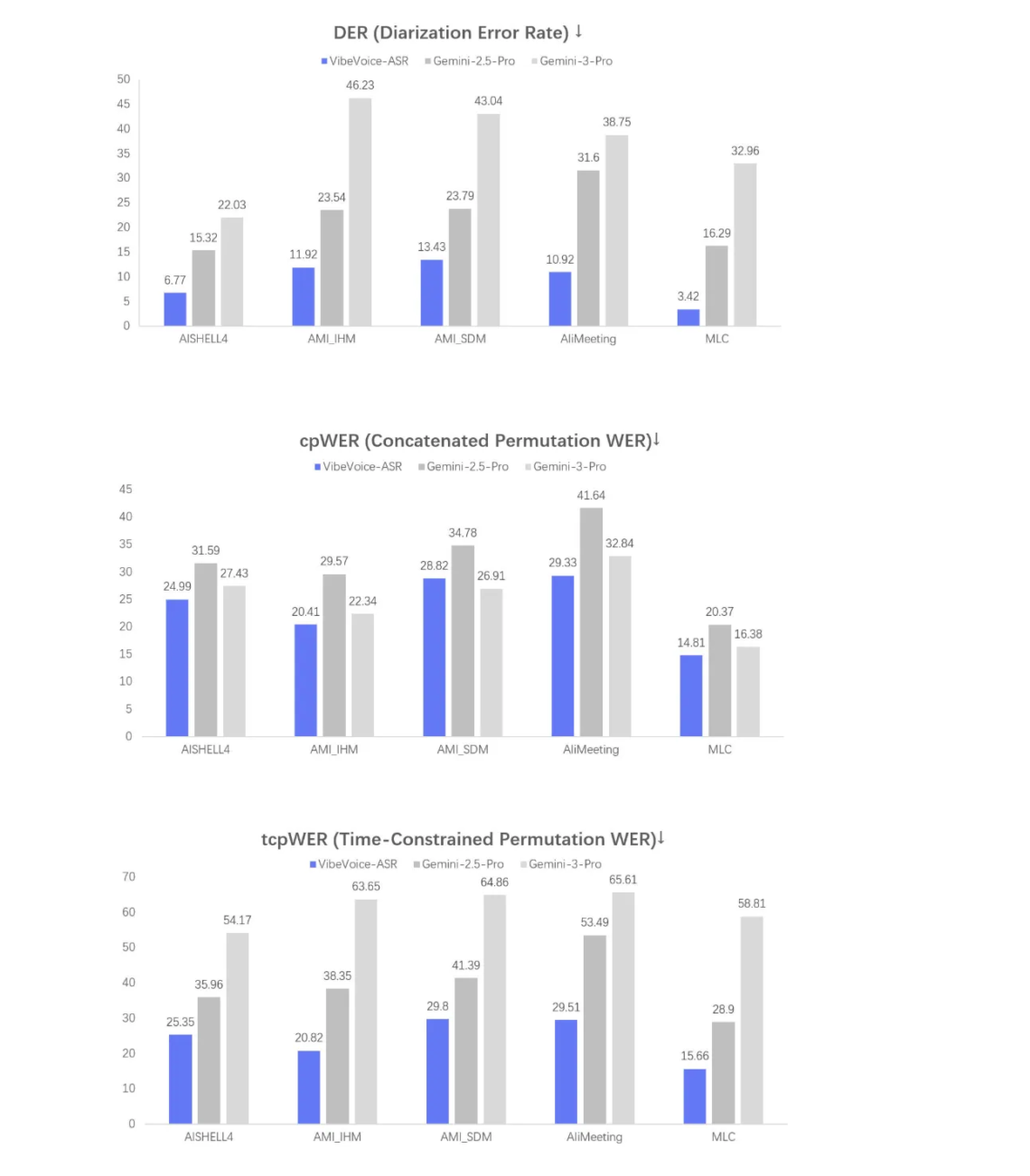

Microsoft has released VibeVoice-ASR as part of the VibeVoice family of open-source frontier voice AI models. VibeVoice-ASR is described as a unified speech-to-text model that can handle 60-minute long-form audio in a single pass and output structured transcriptions that encode Who, When, and What, with support for Customized Hotwords. VibeVoice sits in a single repository that hosts Text-to-Speech, real-time TTS, and Automatic Speech Recognition models under an MIT license. VibeVoice uses continuous speech tokenizers that run at 7.5 Hz and a next-token diffusion framework where a Large Language Model reasons over text and dialogue and a diffusion head…

-

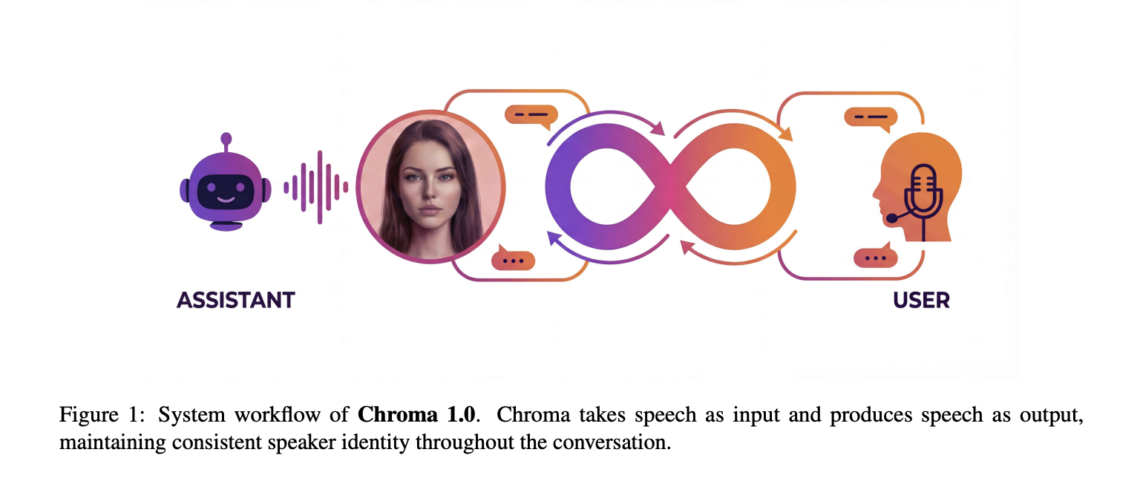

Chroma 1.0 is a real-time speech-to-speech dialogue model that takes audio as input and returns audio as output while preserving the speaker identity across multi-turn conversations. It is presented as the first open-source, end-to-end spoken dialogue system that combines low-latency interaction with high-fidelity personalized voice cloning from only a few seconds of reference audio. The model operates directly on discrete speech representations rather than on text transcripts. It targets the same use cases as commercial real-time agents, but with a compact 4B-parameter dialogue core and a design that treats…

-

Inworld AI has introduced Inworld TTS-1.5, an upgrade to its TTS-1 family that targets realtime voice agents with strict constraints on latency, quality, and cost. TTS-1.5 is described as the top-ranked text-to-speech system on Artificial Analysis and is designed to be more expressive and more stable than prior generations while remaining suitable for large-scale consumer deployments.

Realtime latency for interactive agents

TTS-1.5 focuses on P90 time to first audio latency, which is a critical metric for user-perceived responsiveness. For TTS-1.5 Max, P90 time to first audio is below 250 ms. For TTS-1.5 Mini, P90…
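To make the P90 metric concrete, here is a minimal sketch of computing a 90th-percentile time to first audio from a batch of measurements. The sample latencies are invented for illustration and are not Inworld's data, and the nearest-rank percentile definition is an assumption; other conventions interpolate between samples and can give slightly different values.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Return the pct-th percentile of samples using the nearest-rank method."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    # Nearest rank: the smallest value with at least pct% of samples at or below it.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical time-to-first-audio measurements in milliseconds (illustrative only).
ttfa_ms = [180, 195, 210, 205, 190, 240, 220, 185, 230, 300]

p90 = percentile_nearest_rank(ttfa_ms, 90)
print(f"P90 time to first audio: {p90} ms")
```

A P90 figure says that 9 out of 10 requests started playing audio at or before that time, so a single slow outlier (the 300 ms sample here) does not dominate the number the way it would dominate a maximum.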

-

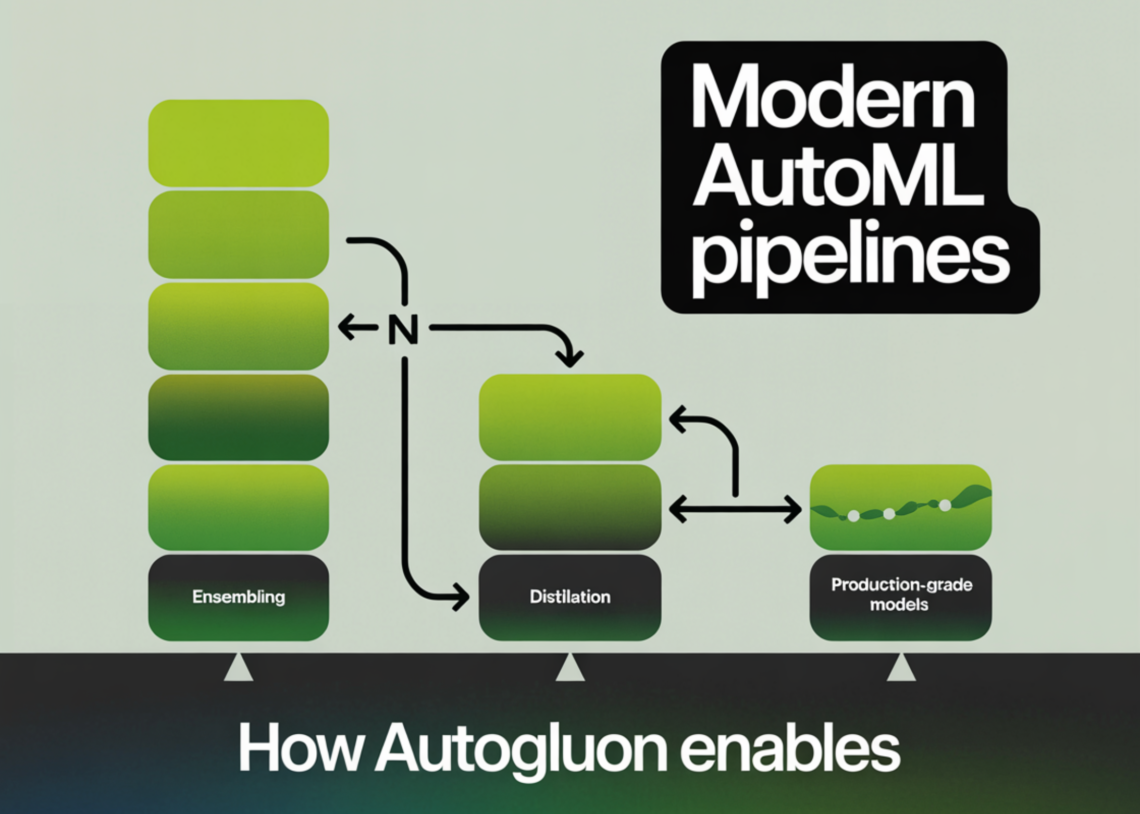

In this tutorial, we build a production-grade tabular machine learning pipeline using AutoGluon, taking a real-world mixed-type dataset from raw ingestion through to deployment-ready artifacts. We train high-quality stacked and bagged ensembles, evaluate performance with robust metrics, perform subgroup and feature-level analysis, and then optimize the model for real-time inference using refit-full and distillation. Throughout the workflow, we focus on practical decisions that balance accuracy, latency, and deployability.

    !pip -q install -U "autogluon==1.5.0" "scikit-learn>=1.3" "pandas>=2.0" "numpy>=1.24"
    import os, time, json, warnings
    warnings.filterwarnings("ignore")
    import numpy as np
    import pandas as pd
    from sklearn.model_selection import train_test_split
    from…

-

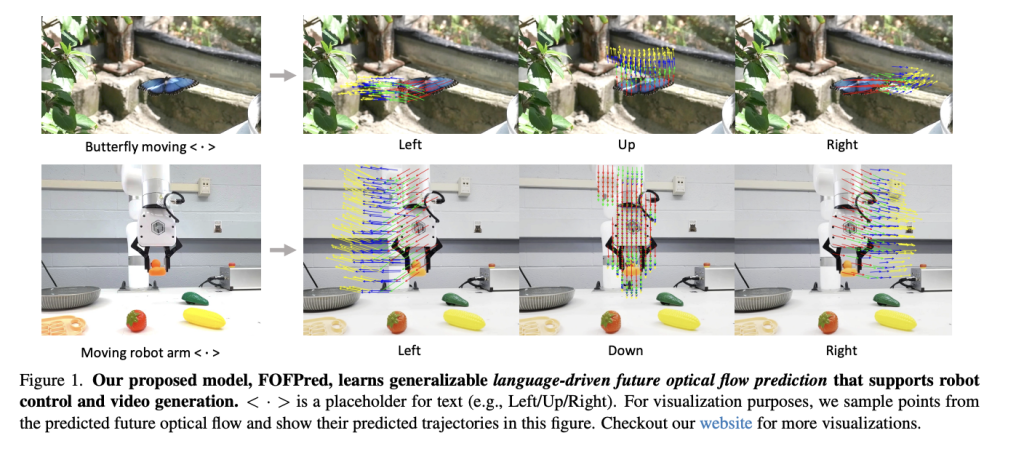

The Salesforce AI Research team presents FOFPred, a language-driven future optical flow prediction framework that connects large vision-language models with diffusion transformers for dense motion forecasting in control and video generation settings. FOFPred takes one or more images and a natural language instruction such as ‘moving the bottle from right to left’ and predicts 4 future optical flow frames that describe how every pixel is expected to move over time.

https://arxiv.org/pdf/2601.10781

Future optical flow as a motion representation

Optical flow is the apparent per-pixel displacement between two frames. FOFPred focuses on future optical flow, which means predicting dense…

-

In this tutorial, we demonstrate how a semi-centralized Anemoi-style multi-agent system works by letting two peer agents negotiate directly without a manager or supervisor. We show how a Drafter and a Critic iteratively refine an output through peer-to-peer feedback, reducing coordination overhead while preserving quality. We implement this pattern end-to-end in Colab using LangGraph, focusing on clarity, control flow, and practical execution rather than abstract orchestration theory.

    !pip -q install -U langgraph langchain-openai langchain-core
    import os
    import json
    from getpass import getpass
    from typing import TypedDict
    from langchain_openai import ChatOpenAI
    from langgraph.graph import StateGraph, END…
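The control flow of a Drafter and a Critic exchanging feedback without a supervisor can be sketched in plain Python. This is not the tutorial's LangGraph implementation: `PeerState`, `stub_drafter`, `stub_critic`, and `run_peer_loop` are hypothetical names, and both agents are deterministic stubs standing in for LLM calls so the loop can run without an API key.

```python
from typing import Callable, Tuple, TypedDict


class PeerState(TypedDict):
    task: str
    draft: str
    feedback: str
    rounds: int
    approved: bool


def stub_drafter(state: PeerState) -> str:
    """Hypothetical drafter: writes a first draft, then folds in the critic's feedback."""
    if not state["draft"]:
        return f"Draft for: {state['task']}"
    return f"{state['draft']} [revised: {state['feedback']}]"


def stub_critic(state: PeerState) -> Tuple[bool, str]:
    """Hypothetical critic: approves once the draft has been revised at least once."""
    if "[revised:" in state["draft"]:
        return True, "looks good"
    return False, "add a concrete example"


def run_peer_loop(
    task: str,
    drafter: Callable[[PeerState], str],
    critic: Callable[[PeerState], Tuple[bool, str]],
    max_rounds: int = 3,
) -> PeerState:
    """Alternate drafter and critic turns until approval or the round limit."""
    state: PeerState = {
        "task": task, "draft": "", "feedback": "", "rounds": 0, "approved": False,
    }
    while state["rounds"] < max_rounds and not state["approved"]:
        state["draft"] = drafter(state)
        state["approved"], state["feedback"] = critic(state)
        state["rounds"] += 1
    return state


result = run_peer_loop("Summarize the release notes", stub_drafter, stub_critic)
print(result["rounds"], result["approved"])
print(result["draft"])
```

The two agents share one state dictionary and hand control back and forth directly; the only central element is the round limit, which keeps the negotiation from looping forever if the critic never approves.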

-

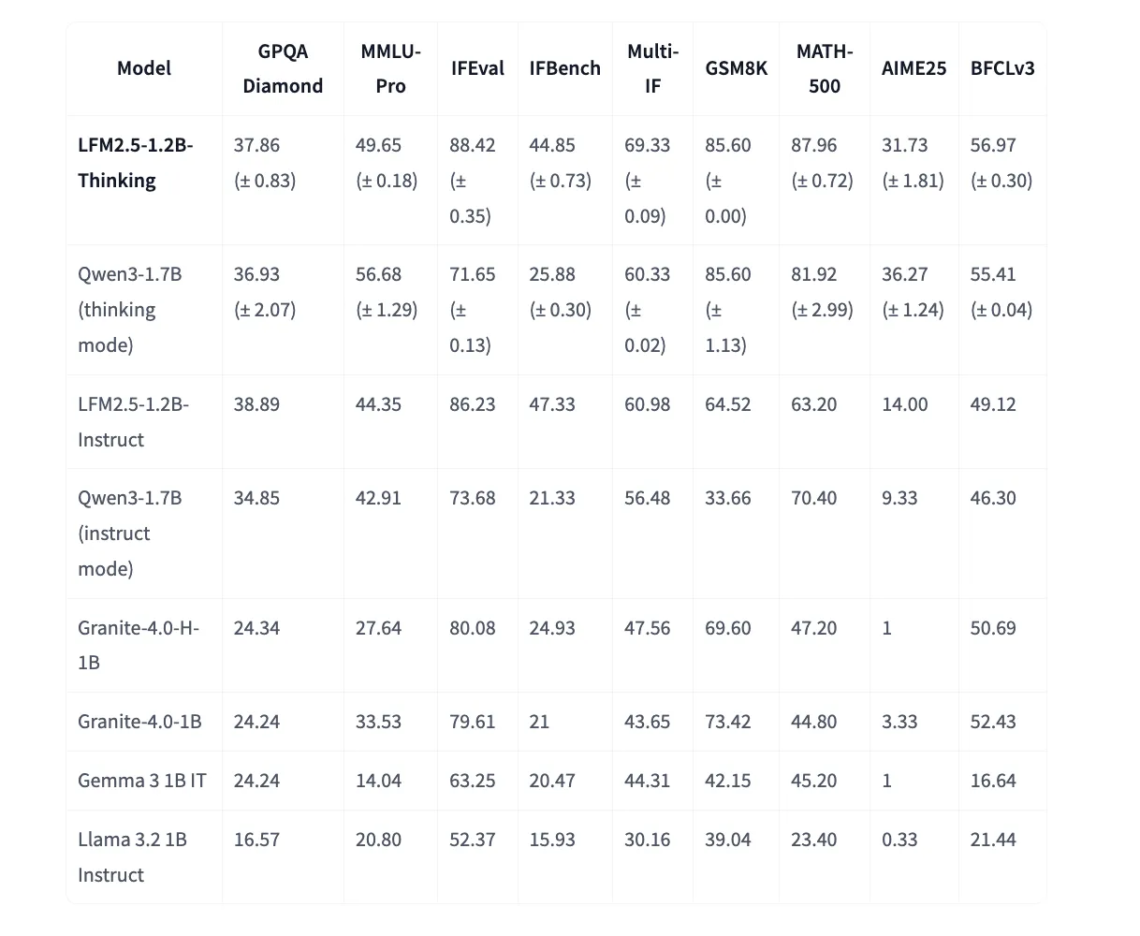

Liquid AI has released LFM2.5-1.2B-Thinking, a 1.2 billion parameter reasoning model that runs fully on device and fits in about 900 MB on a modern phone. What needed a data center 2 years ago can now run offline on consumer hardware, with a focus on structured reasoning traces, tool use, and math, rather than general chat.

Position in the LFM2.5 family and core specs

LFM2.5-1.2B-Thinking is part of the LFM2.5 family of Liquid Foundation Models, which extends the earlier LFM2 architecture with more pre-training and multi-stage reinforcement learning for edge deployment. The model is text only and general purpose…

-

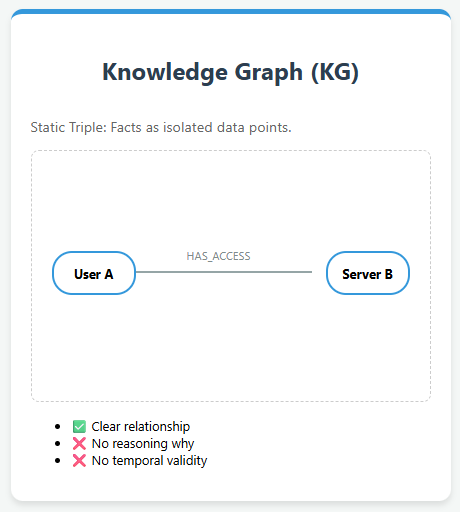

Knowledge Graphs and their limitations

With the rapid growth of AI applications, Knowledge Graphs (KGs) have emerged as a foundational structure for representing knowledge in a machine-readable form. They organize information as triples (a head entity, a relation, and a tail entity), forming a graph-like structure where entities are nodes and relationships are edges. This representation allows machines to understand and reason over connected knowledge, supporting intelligent applications such as question answering, semantic analysis, and recommendation systems.

Despite their effectiveness, KGs have notable limitations. They often lose important contextual information, making it difficult to capture the complexity and richness of…
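The triple structure described above can be sketched with a few lines of Python. The example triples and the helper names `build_graph`, `tails`, and `two_hop` are hypothetical and chosen only for illustration; real knowledge graphs typically use dedicated stores and identifier schemes rather than plain strings.

```python
from collections import defaultdict

# Hypothetical example triples: (head entity, relation, tail entity).
triples = [
    ("Marie Curie", "born_in", "Warsaw"),
    ("Marie Curie", "field", "Physics"),
    ("Warsaw", "capital_of", "Poland"),
]


def build_graph(triples):
    """Index triples by head entity: nodes are entities, edges are relations."""
    graph = defaultdict(list)
    for head, relation, tail in triples:
        graph[head].append((relation, tail))
    return graph


def tails(graph, head, relation):
    """Return every tail entity reachable from head via the given relation."""
    return [tail for rel, tail in graph.get(head, []) if rel == relation]


def two_hop(graph, head, first_relation, second_relation):
    """Follow two relations in sequence, a simple form of reasoning over edges."""
    results = []
    for middle in tails(graph, head, first_relation):
        results.extend(tails(graph, middle, second_relation))
    return results


graph = build_graph(triples)
print(tails(graph, "Marie Curie", "born_in"))
print(two_hop(graph, "Marie Curie", "born_in", "capital_of"))
```

The two-hop query answers a question that no single triple states directly, which is the kind of connected reasoning the triple representation is meant to support. Each triple carries only a head, a relation, and a tail, with no room for qualifiers such as time or source.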

-

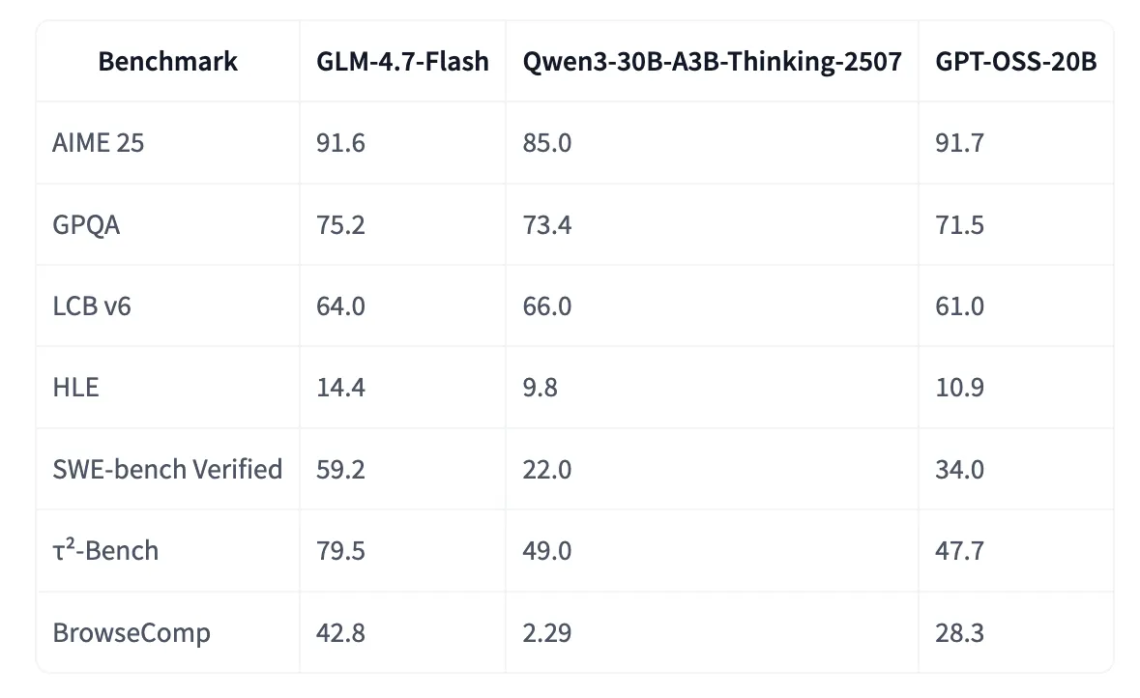

GLM-4.7-Flash is a new member of the GLM 4.7 family and targets developers who want strong coding and reasoning performance in a model that is practical to run locally. Zhipu AI (Z.ai) describes GLM-4.7-Flash as a 30B-A3B MoE model and presents it as the strongest model in the 30B class, designed for lightweight deployment where performance and efficiency both matter.

Model class and position inside the GLM 4.7 family

GLM-4.7-Flash is a text generation model with 31B params, BF16 and F32 tensor types, and the architecture tag glm4_moe_lite. It supports English and Chinese, and it is configured for conversational use.…

-

Microsoft Research has released OptiMind, an AI-based system that converts natural language descriptions of complex decision problems into mathematical formulations that optimization solvers can execute. It targets a long-standing bottleneck in operations research, where translating business intent into mixed integer linear programs usually needs expert modelers and days of work.

What OptiMind is and what it outputs

OptiMind-SFT is a specialized 20B-parameter Mixture of Experts model in the gpt-oss transformer family. About 3.6B parameters are active per token, so inference cost is closer to a mid-sized model while keeping high capacity. The context length is…