In this tutorial, we build an end-to-end streaming voice agent that mirrors how modern low-latency conversational systems operate in real time. We simulate the complete pipeline, from chunked audio input and streaming speech recognition to incremental language model reasoning and streamed text-to-speech output, while explicitly tracking latency at every stage. By working with strict latency budgets and observing metrics such as time to first token and time to first audio, we focus on the practical engineering trade-offs that shape responsive voice-based user experiences.

    import time
    import asyncio
    import numpy as np
    from collections import deque…
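Time to first token, one of the latency metrics named above, can be illustrated with a minimal sketch. This is not the tutorial's code: `fake_token_stream`, `measure_stream`, and the delay values are hypothetical stand-ins for a real streaming model.

```python
import asyncio
import time
from typing import AsyncIterator, List, Optional, Tuple


async def fake_token_stream(
    tokens: List[str], first_delay: float = 0.05, inter_delay: float = 0.01
) -> AsyncIterator[str]:
    """Hypothetical stand-in for a streaming LLM: waits, then yields tokens one by one."""
    await asyncio.sleep(first_delay)  # simulated "thinking" time before the first token
    for i, tok in enumerate(tokens):
        if i:
            await asyncio.sleep(inter_delay)  # simulated gap between later tokens
        yield tok


async def measure_stream(
    stream: AsyncIterator[str],
) -> Tuple[List[str], Optional[float], float]:
    """Consume a stream and return (tokens, time to first token, total time) in seconds."""
    start = time.perf_counter()
    ttft: Optional[float] = None
    received: List[str] = []
    async for tok in stream:
        if ttft is None:
            ttft = time.perf_counter() - start  # latency until the first token arrives
        received.append(tok)
    total = time.perf_counter() - start
    return received, ttft, total


tokens, ttft, total = asyncio.run(
    measure_stream(fake_token_stream(["Hello", ",", " world"]))
)
print(f"tokens={tokens} ttft={ttft:.3f}s total={total:.3f}s")
```

The same wrapper pattern could be applied to each stage of a voice pipeline (recognition, reasoning, synthesis) to compare per-stage latency against a budget.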

-

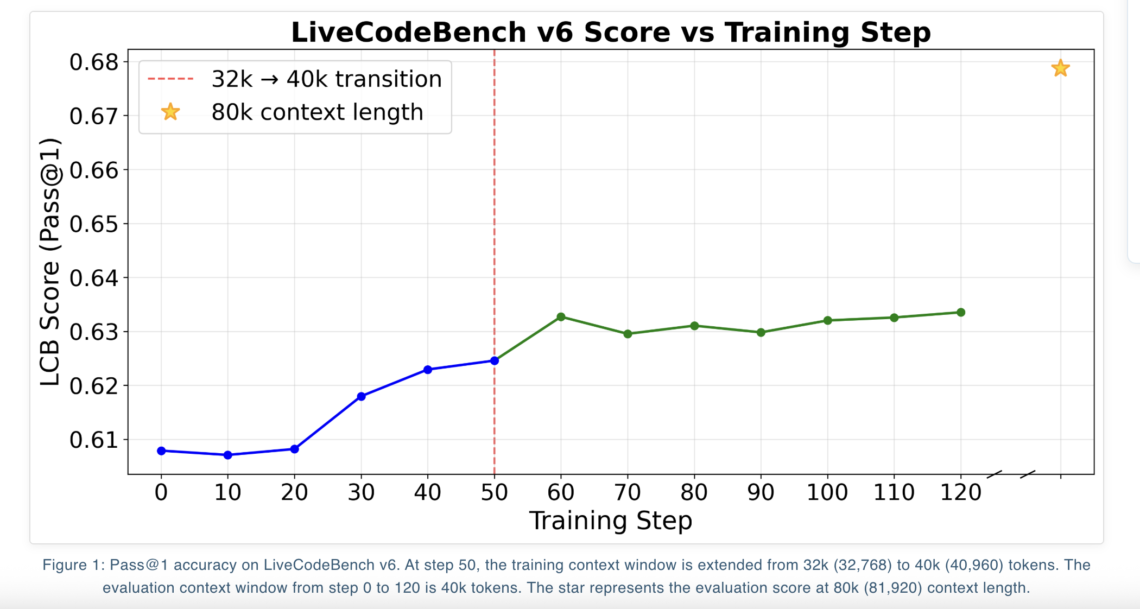

Nous Research has introduced NousCoder-14B, a competitive olympiad programming model that is post-trained on Qwen3-14B using reinforcement learning (RL) with verifiable rewards. On the LiveCodeBench v6 benchmark, which covers problems from 08/01/2024 to 05/01/2025, the model reaches a Pass@1 accuracy of 67.87 percent, 7.08 percentage points above the Qwen3-14B baseline of 60.79 percent on the same benchmark. The research team trained the model on 24k verifiable coding problems using 48 B200 GPUs over 4 days and released the weights under the Apache 2.0 license on Hugging Face.

https://nousresearch.com/nouscoder-14b-a-competitive-olympiad-programming-model/

Benchmark focus and what Pass@1 means

LiveCodeBench v6…
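For readers unfamiliar with the metric, Pass@1 is the probability that a single sampled solution passes all tests for a problem, averaged over problems. The sketch below uses the commonly cited unbiased pass@k estimator; whether LiveCodeBench computes its score in exactly this way is an assumption here, and the per-problem sample counts are made up for illustration.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples of which c passed all tests."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_1(per_problem: List[Tuple[int, int]]) -> float:
    """Average pass@1 over problems given (samples drawn, samples passed) pairs."""
    return sum(pass_at_k(n, c, 1) for n, c in per_problem) / len(per_problem)


# Illustrative data only: four problems, four samples each.
results = [(4, 4), (4, 2), (4, 0), (4, 1)]
score = benchmark_pass_at_1(results)
print(f"pass@1 = {score:.4f}")

# The gap quoted in the text, in percentage points.
gap = round(67.87 - 60.79, 2)
print(f"gap = {gap} percentage points")
```

With k = 1 the estimator reduces to the fraction of samples that pass, so a score of 67.87 percent means roughly two out of three first attempts solve their problem.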

-

In this tutorial, we build a hands-on comparison between a synchronous RPC-based system and an asynchronous event-driven architecture to understand how real distributed systems behave under load and failure. We simulate downstream services with variable latency, overload conditions, and transient errors, and then drive both architectures using bursty traffic patterns. By observing metrics such as tail latency, retries, failures, and dead-letter queues, we examine how tight RPC coupling amplifies failures and how asynchronous event-driven designs trade immediate consistency for resilience. Throughout the tutorial, we focus on practical mechanisms such as retries, exponential backoff, circuit breakers, bulkheads, and queues that engineers use to…
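Two of the mechanisms named above, retries and exponential backoff, can be sketched in a few lines. This is an illustrative sketch rather than the tutorial's implementation: `retry_with_backoff`, its parameters, and the `flaky` service are hypothetical, and the `sleep` and `jitter` hooks are injected only so the example runs instantly and deterministically.

```python
import random
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    call: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.1,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
    jitter: Callable[[], float] = random.random,
) -> T:
    """Retry `call`, doubling the delay ceiling after each failure (with jitter)."""
    for attempt in range(max_attempts):
        try:
            return call()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # retries exhausted: surface the last error to the caller
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            sleep(ceiling * jitter())  # random fraction of the ceiling spreads out retries
    raise RuntimeError("max_attempts must be at least 1")


# Hypothetical downstream call that fails twice with a transient error, then succeeds.
attempts = {"n": 0}


def flaky() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise ConnectionError("transient failure")
    return "ok"


delays: List[float] = []
# Record delays instead of sleeping, and fix jitter at 1.0 to make the run deterministic.
result = retry_with_backoff(flaky, sleep=delays.append, jitter=lambda: 1.0)
print(result, delays)
```

In a synchronous RPC chain, every caller blocked on such a retry loop holds its own resources while waiting, which is one way retries can amplify load on an already struggling service.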

-

Vercel has released agent-skills, a collection of skills that turns best-practice playbooks into reusable skills for AI coding agents. The project follows the Agent Skills specification and focuses first on React and Next.js performance, web design review, and claimable deployments on Vercel. Skills are installed with a command that feels similar to npm, and are then discovered by compatible agents during normal coding flows.

Agent Skills format

Agent Skills is an open format for packaging capabilities for AI agents. A skill is a folder that contains instructions and optional scripts. The format is designed so that different tools can…

-

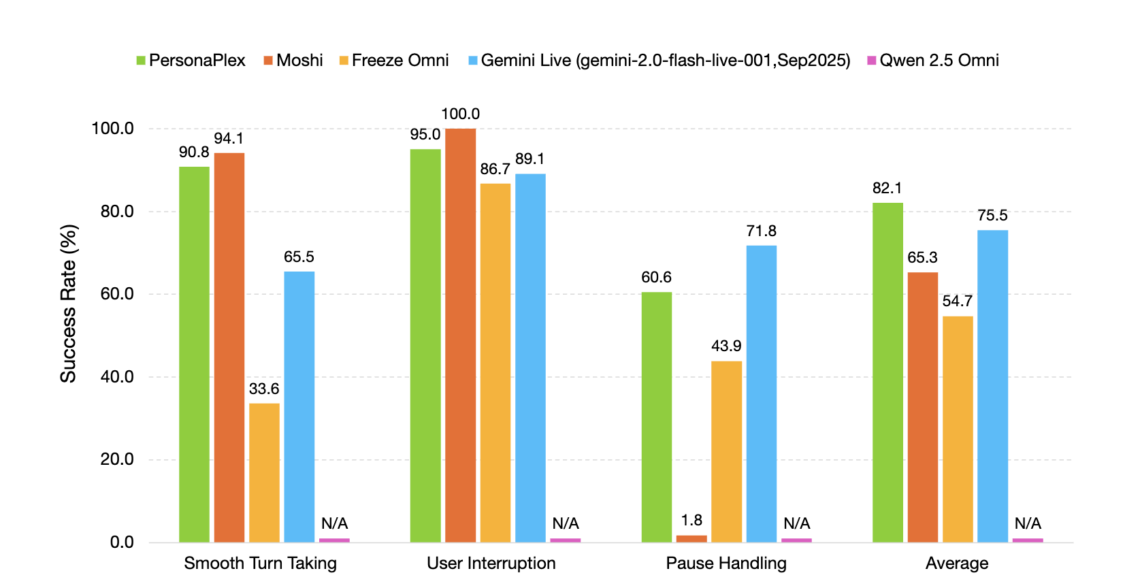

NVIDIA researchers have released PersonaPlex-7B-v1, a full-duplex speech-to-speech conversational model that targets natural voice interactions with precise persona control.

From ASR→LLM→TTS to a single full-duplex model

Conventional voice assistants usually run a cascade. Automatic Speech Recognition (ASR) converts speech to text, a language model generates a text answer, and Text to Speech (TTS) converts that answer back to audio. Each stage adds latency, and the pipeline cannot handle overlapping speech, natural interruptions, or dense backchannels. PersonaPlex replaces this stack with a single Transformer model that performs streaming speech understanding and speech generation in one network. The model operates on…

-

In this tutorial, we build an advanced agentic AI workflow using LlamaIndex and OpenAI models. We focus on designing a reliable retrieval-augmented generation (RAG) agent that can reason over evidence, use tools deliberately, and evaluate its own outputs for quality. By structuring the system around retrieval, answer synthesis, and self-evaluation, we demonstrate how agentic patterns go beyond simple chatbots and move toward more trustworthy, controllable AI systems suitable for research and analytical use cases.

    !pip -q install -U llama-index llama-index-llms-openai llama-index-embeddings-openai nest_asyncio

    import os
    import asyncio
    import nest_asyncio
    nest_asyncio.apply()
    from getpass import getpass
    if not os.environ.get("OPENAI_API_KEY"):
        os.environ["OPENAI_API_KEY"] = getpass("Enter OPENAI_API_KEY:…

-

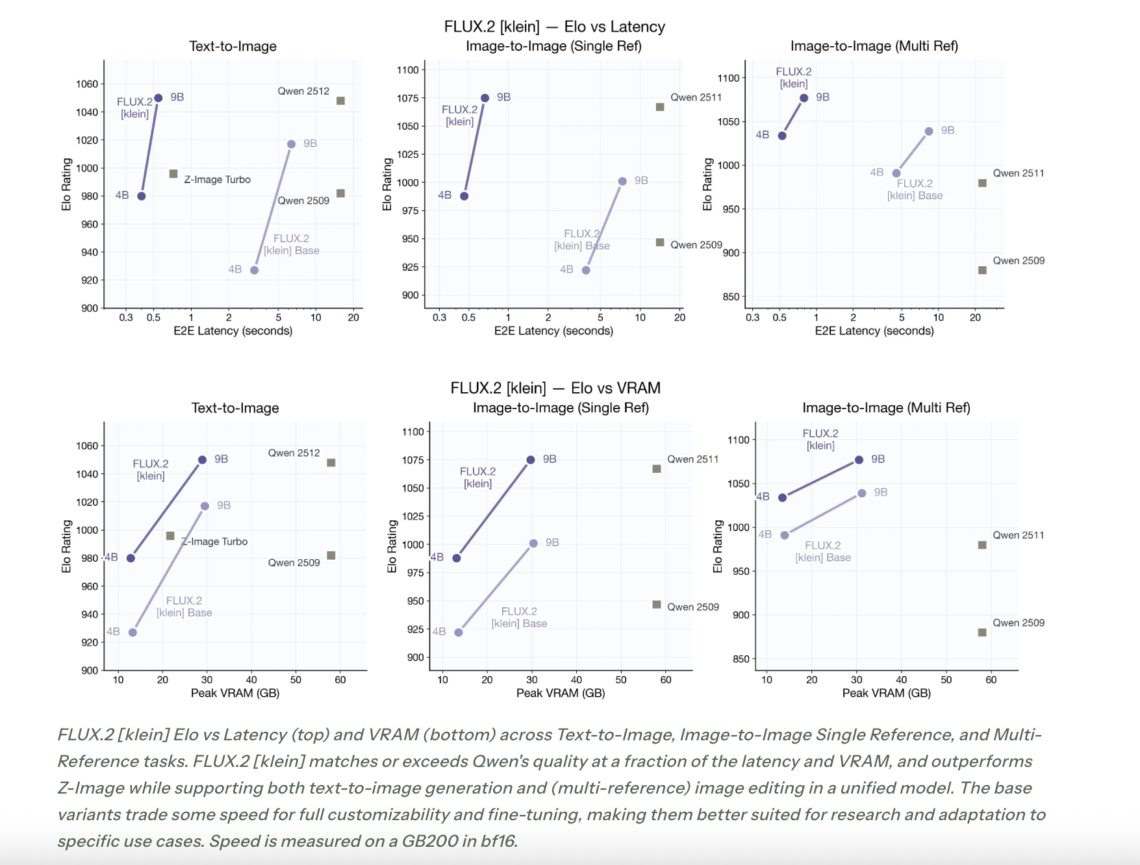

Black Forest Labs releases FLUX.2 [klein], a compact image model family that targets interactive visual intelligence on consumer hardware. FLUX.2 [klein] extends the FLUX.2 line with sub-second generation and editing, a unified architecture for text-to-image and image-to-image, and deployment options that range from local GPUs to cloud APIs, while keeping state-of-the-art image quality.

From FLUX.2 [dev] to interactive visual intelligence

FLUX.2 [dev] is a 32 billion parameter rectified flow transformer for text-conditioned image generation and editing, including composition with multiple reference images, and runs mainly on data-center-class accelerators. It…

-

    def _now_iso() -> str:
        return datetime.utcnow().replace(microsecond=0).isoformat() + "Z"


    def _stable_id(prefix: str, seed: str) -> str:
        h = hashlib.sha256(seed.encode("utf-8")).hexdigest()[:10]
        return f"{prefix}_{h}"


    class MockEHR:
        def __init__(self):
            self.orders_queue: List[SurgeryOrder] = []
            self.patient_docs: Dict[str, List[ClinicalDocument]] = {}

        def seed_data(self, n_orders: int = 5):
            random.seed(7)

            def make_patient(i: int) -> Patient:
                pid = f"PT{i:04d}"
                plan = random.choice(list(InsurancePlan))
                return Patient(
                    patient_id=pid,
                    name=f"Patient {i}",
                    dob="1980-01-01",
                    member_id=f"M{i:08d}",
                    plan=plan,
                )

            def docs_for_order(patient: Patient, surgery: SurgeryType) -> List[ClinicalDocument]:
                base = [
                    ClinicalDocument(
                        doc_id=_stable_id("DOC", patient.patient_id + "H&P"),
                        doc_type=DocType.H_AND_P,
                        created_at=_now_iso(),
                        content="H&P: Relevant history, exam findings, and surgical indication.",
                        source="EHR",
                    ),
                    ClinicalDocument(
                        doc_id=_stable_id("DOC", patient.patient_id + "NOTE"),
                        doc_type=DocType.CLINICAL_NOTE,
                        created_at=_now_iso(),
                        content="Clinical note: Symptoms, conservative management attempted,…

-

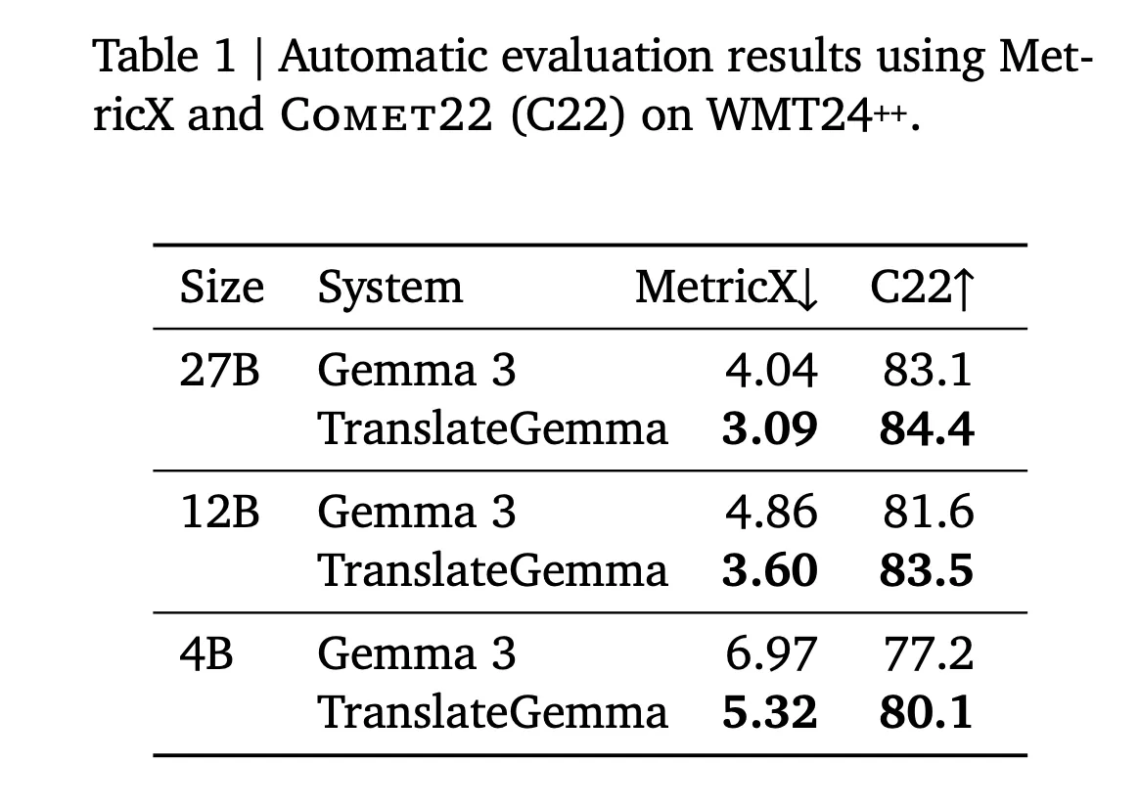

Google AI has released TranslateGemma, a suite of open machine translation models built on Gemma 3 and targeted at 55 languages. The family comes in 4B, 12B, and 27B parameter sizes and is designed to run across devices from mobile and edge hardware to laptops and a single H100 GPU or TPU instance in the cloud. TranslateGemma is not a separate architecture. It is Gemma 3 specialized for translation through a two-stage post-training pipeline: (1) supervised fine-tuning on large parallel corpora, and (2) reinforcement learning that optimizes translation quality with a multi-signal reward ensemble. The goal is…

-

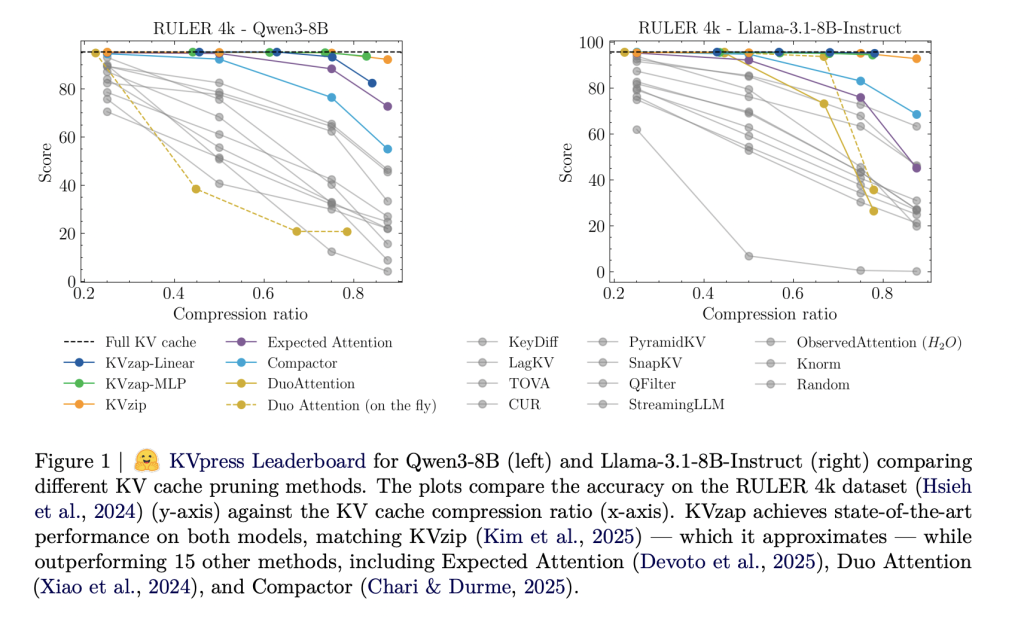

As context lengths move into tens and hundreds of thousands of tokens, the key-value (KV) cache in transformer decoders becomes a primary deployment bottleneck. The cache stores keys and values for every layer and head, with shape (2, L, H, T, D). For a vanilla transformer such as Llama1-65B, the cache reaches about 335 GB at 128k tokens in bfloat16, which directly limits batch size and increases time to first token.

https://arxiv.org/pdf/2601.07891

Architectural compression leaves the sequence axis untouched

Production models already compress the cache along several axes. Grouped Query Attention shares keys and values across multiple query heads and yields…
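The 335 GB figure quoted above can be reproduced with a short calculation. The sketch reads the shape (2, L, H, T, D) as keys plus values, layers, heads, tokens, and head dimension, and it assumes the Llama1-65B configuration of 80 layers, 64 heads, and head dimension 128, with 128k taken as 128,000 tokens; none of those values are stated in the text.

```python
def kv_cache_bytes(
    layers: int, heads: int, tokens: int, head_dim: int, bytes_per_elem: int = 2
) -> int:
    """Bytes needed for a (2, L, H, T, D) key-value cache; the leading 2 is keys plus values."""
    return 2 * layers * heads * tokens * head_dim * bytes_per_elem


# Assumed Llama1-65B shape (not given in the text): 80 layers, 64 heads, head dim 128.
# bfloat16 stores each element in 2 bytes.
size = kv_cache_bytes(layers=80, heads=64, tokens=128_000, head_dim=128)
print(f"{size / 1e9:.1f} GB for one sequence")

# Per-token cost, which is what grows along the sequence axis.
per_token = kv_cache_bytes(layers=80, heads=64, tokens=1, head_dim=128)
print(f"{per_token / 2**20:.1f} MiB per token")
```

Because the size is linear in every factor, sharing keys and values across heads shrinks the H term, while the T term keeps growing with context length.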