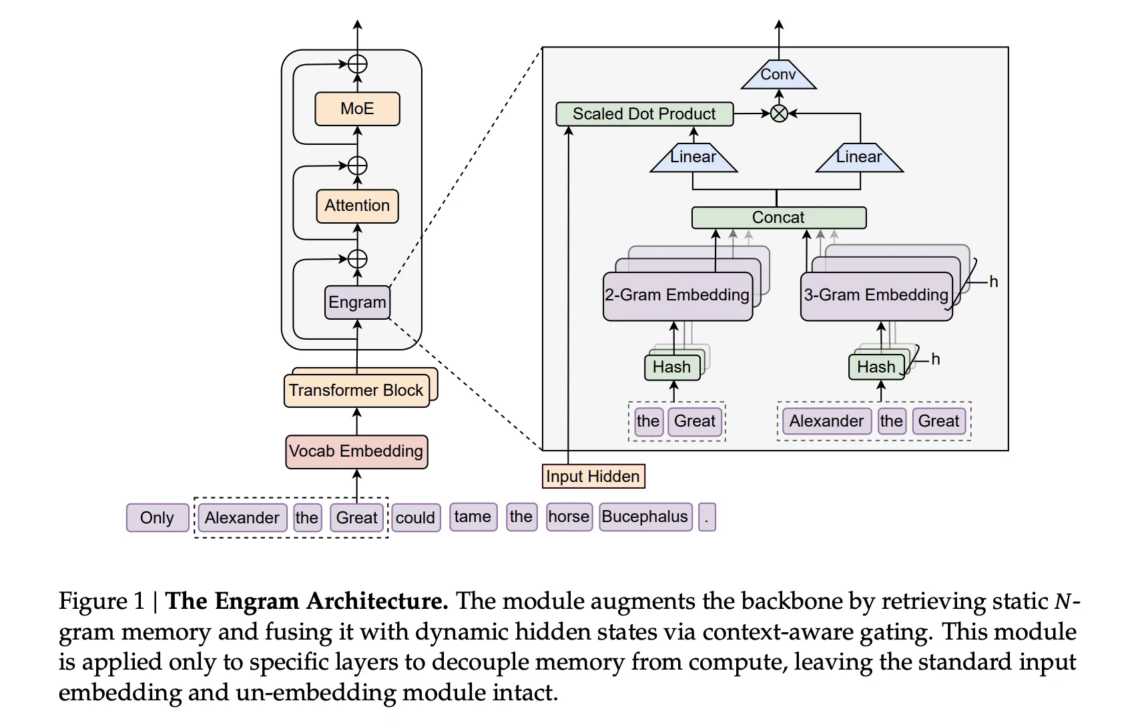

Transformers use attention and Mixture-of-Experts to scale computation, but they still lack a native way to perform knowledge lookup. They re-compute the same local patterns again and again, which wastes depth and FLOPs. DeepSeek’s new Engram module targets exactly this gap by adding a conditional memory axis that works alongside MoE rather than replacing it. At a high level, Engram modernizes classic N-gram embeddings and turns them into a scalable, O(1) lookup memory that plugs directly into the Transformer backbone. The result is a parametric memory that stores static patterns such as common phrases and entities, while the backbone…
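
The idea of an O(1) N-gram lookup can be illustrated with a toy hashed table. This is only a sketch of the general technique, not DeepSeek's Engram implementation: the class name `HashedNgramMemory`, the table size, the hash function, and the placeholder vectors are all assumptions made for illustration.

```python
import hashlib
from typing import List, Sequence


class HashedNgramMemory:
    """Toy N-gram memory: each N-gram is hashed to a slot in a fixed-size table."""

    def __init__(self, num_slots: int = 1024, dim: int = 4, n: int = 2) -> None:
        self.num_slots = num_slots
        self.dim = dim
        self.n = n
        # Deterministic placeholder vectors; a real model would learn these.
        self.table = [
            [((slot * 31 + j) % 7) / 7.0 for j in range(dim)]
            for slot in range(num_slots)
        ]

    def slot_for(self, ngram: Sequence[int]) -> int:
        """Map an N-gram of token ids to a table slot with a stable hash."""
        key = ",".join(str(t) for t in ngram).encode("utf-8")
        digest = hashlib.blake2b(key, digest_size=8).digest()
        return int.from_bytes(digest, "big") % self.num_slots

    def lookup(self, token_ids: Sequence[int]) -> List[List[float]]:
        """Return one vector per position, keyed by the N-gram ending there."""
        out = []
        for i in range(len(token_ids)):
            if i + 1 < self.n:
                out.append([0.0] * self.dim)  # not enough left context yet
            else:
                ngram = token_ids[i + 1 - self.n : i + 1]
                out.append(self.table[self.slot_for(ngram)])
        return out


memory = HashedNgramMemory()
vectors = memory.lookup([5, 9, 5, 9])  # positions 1 and 3 share the bigram (5, 9)
```

Each position costs one hash and one table read regardless of table size, which is the property the O(1) claim refers to. Distinct N-grams can collide in the same slot in this sketch; how a production system handles that is outside what the excerpt states.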

-

In this tutorial, we build a clean, advanced demonstration of modern MCP design by focusing on three core ideas: stateless communication, strict SDK-level validation, and asynchronous, long-running operations. We implement a minimal MCP-like protocol using structured envelopes, signed requests, and Pydantic-validated tools to show how agents and services can interact safely without relying on persistent sessions.

    import asyncio, time, json, uuid, hmac, hashlib
    from dataclasses import dataclass
    from typing import Any, Dict, Optional, Literal, List
    from pydantic import BaseModel, Field, ValidationError, ConfigDict

    def _now_ms():
        return int(time.time() * 1000)

    def _uuid():
        return str(uuid.uuid4())

    def _canonical_json(obj):
        return…
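
A signed, stateless request envelope of the kind described above might look roughly like the following. This is a minimal sketch using only the standard library, not the tutorial's actual code: the field names (`id`, `ts_ms`, `method`, `params`, `sig`), the shared secret, and the helper names are assumptions made for illustration.

```python
import hashlib
import hmac
import json
import time
import uuid
from typing import Any, Dict

SECRET = b"demo-shared-secret"  # hypothetical key, for illustration only


def canonical_json(obj: Any) -> bytes:
    """Serialize with sorted keys and no extra whitespace so signatures are stable."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_envelope(method: str, params: Dict[str, Any], secret: bytes = SECRET) -> Dict[str, Any]:
    """Build a self-contained request and attach an HMAC-SHA256 signature."""
    body = {
        "id": str(uuid.uuid4()),
        "ts_ms": int(time.time() * 1000),
        "method": method,
        "params": params,
    }
    sig = hmac.new(secret, canonical_json(body), hashlib.sha256).hexdigest()
    return {**body, "sig": sig}


def verify_envelope(envelope: Dict[str, Any], secret: bytes = SECRET) -> bool:
    """Recompute the signature over everything except `sig` and compare safely."""
    body = {k: v for k, v in envelope.items() if k != "sig"}
    expected = hmac.new(secret, canonical_json(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, str(envelope.get("sig", "")))


envelope = sign_envelope("tools/call", {"name": "add", "arguments": {"a": 1, "b": 2}})
tampered = {**envelope, "params": {"name": "add", "arguments": {"a": 1, "b": 99}}}
```

Because every envelope carries its own id, timestamp, and signature, a server can check it without any session state. `verify_envelope(envelope)` accepts the original request, while the `tampered` copy fails because its body no longer matches the signature.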

-

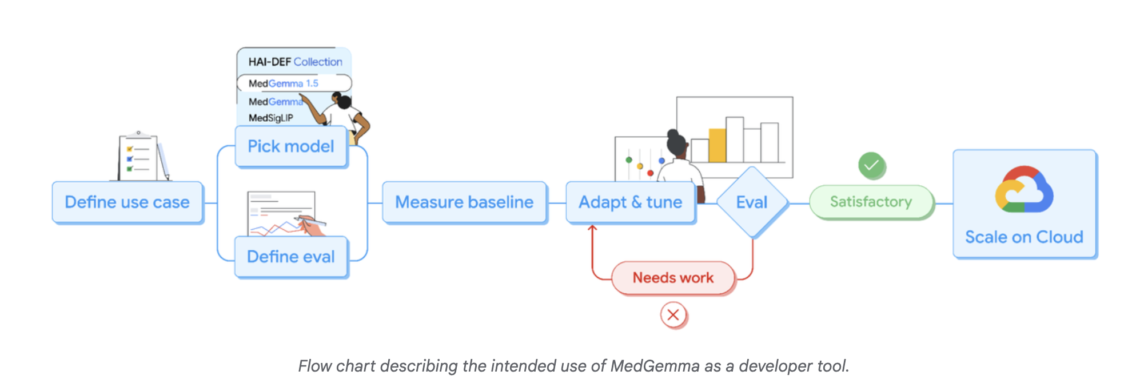

Google Research has expanded its Health AI Developer Foundations program (HAI-DEF) with the release of MedGemma-1.5. The model is released as an open starting point for developers who want to build medical imaging, text and speech systems and then adapt them to local workflows and regulations.

https://research.google/blog/next-generation-medical-image-interpretation-with-medgemma-15-and-medical-speech-to-text-with-medasr/

MedGemma 1.5, small multimodal model for real clinical data

MedGemma is a family of medical generative models built on Gemma. The new release, MedGemma-1.5-4B, targets developers who need a compact model that can still handle real clinical data. The previous MedGemma-1-27B model remains available for more demanding text-heavy use cases. MedGemma-1.5-4B is multimodal.…

-

In this tutorial, we build an advanced, multi-turn crescendo-style red-teaming harness using Garak to evaluate how large language models behave under gradual conversational pressure. We implement a custom iterative probe and a lightweight detector to simulate realistic escalation patterns in which benign prompts slowly pivot toward sensitive requests, and we assess whether the model maintains its safety boundaries across turns. Also, we focus on practical, reproducible evaluation of multi-turn robustness rather than single-prompt failures.

    import os, sys, subprocess, json, glob, re
    from pathlib import Path
    from datetime import datetime, timezone

    subprocess.run(
        [sys.executable, "-m", "pip", "install",…

-

Anthropic has released Cowork, a new feature that runs agentic workflows on local files for non-coding tasks. It is currently available in research preview inside the Claude macOS desktop app.

What Cowork Does At The File System Level

Cowork currently runs as a dedicated mode in the Claude desktop app. When you start a Cowork session, you choose a folder on your system. Claude can then read, edit, or create files only inside that folder. Anthropic gives concrete examples. Claude can reorganize a downloads folder by sorting and renaming files. It can read a directory of screenshots, extract amounts, and build…

-

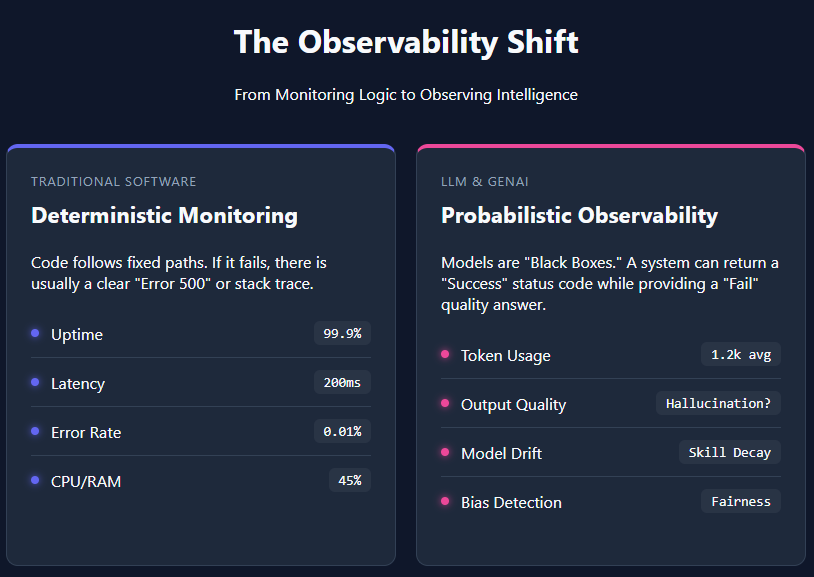

Artificial intelligence (AI) observability refers to the ability to understand, monitor, and evaluate AI systems by tracking their unique metrics—such as token usage, response quality, latency, and model drift. Unlike traditional software, large language models (LLMs) and other generative AI applications are probabilistic in nature. They do not follow fixed, transparent execution paths, which makes their decision-making difficult to trace and reason about. This “black box” behavior creates challenges for trust, especially in high-stakes or production-critical environments. AI systems are no longer experimental demos—they are production software. And like any production system, they need observability. Traditional software engineering has long…

-

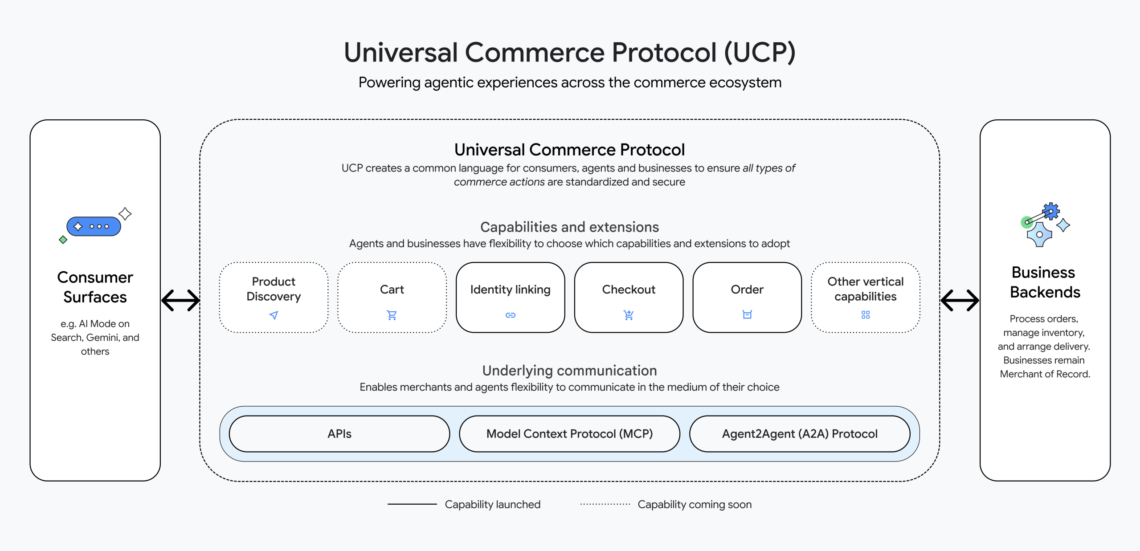

Can AI shopping agents move beyond sending product links and actually complete trusted purchases end to end inside a chat? Universal Commerce Protocol, or UCP, is Google’s new open standard for agentic commerce. It gives AI agents and merchant systems a shared language so that a shopping query can move from product discovery to an authenticated order without custom integrations for every retailer and every surface.

https://developers.googleblog.com/under-the-hood-universal-commerce-protocol-ucp/

What problem is UCP solving?

Today, most AI shopping experiences stop at recommendation. The agent aggregates links, and you handle stock checks, coupon codes, and checkout flows on separate sites. Google’s engineering team describes…

-

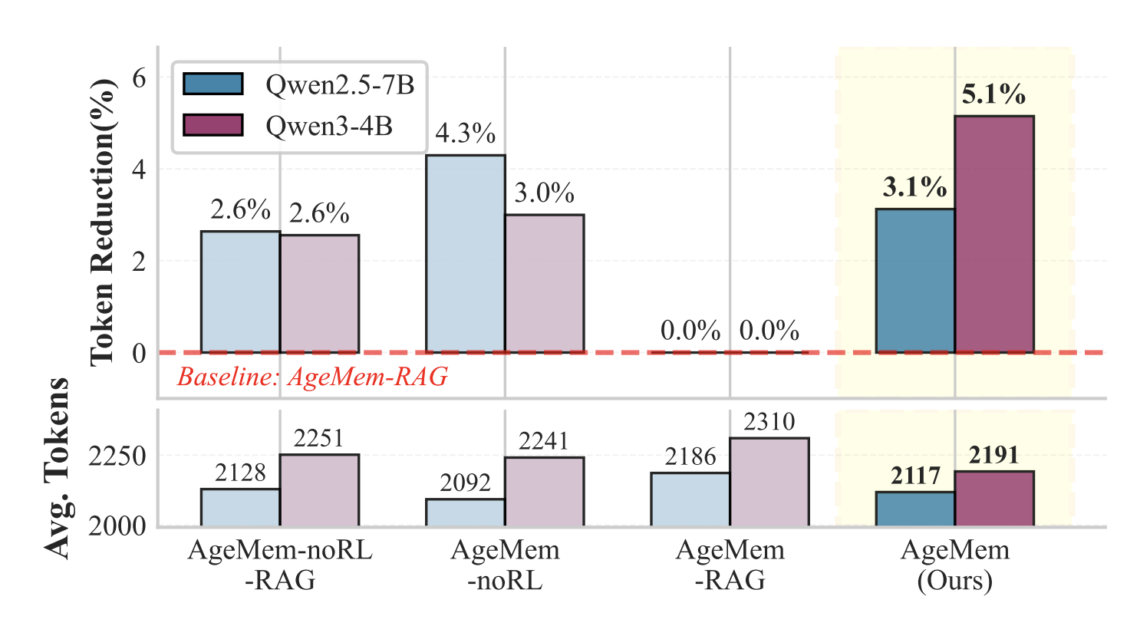

How do you design an LLM agent that decides for itself what to store in long-term memory, what to keep in short-term context, and what to discard, without hand-tuned heuristics or extra controllers? Can a single policy learn to manage both memory types through the same action space as text generation? Researchers from Alibaba Group and Wuhan University introduce Agentic Memory, or AgeMem, a framework that lets large language model agents learn how to manage both long-term and short-term memory as part of a single policy. Instead of relying on handwritten rules or external…

-

What does an end-to-end stack for terminal agents look like when you combine structured toolkits, synthetic RL environments, and benchmark-aligned evaluation? A team of researchers from CAMEL AI, Eigent AI and other collaborators has released SETA, a toolkit and environment stack that focuses on reinforcement learning for terminal agents. The project targets agents that operate inside a Unix-style shell and must complete verifiable tasks under a benchmark harness such as Terminal Bench.

Three main contributions:

A state-of-the-art terminal agent on Terminal Bench: They achieve state-of-the-art performance with a Claude Sonnet…

-

In this tutorial, we demonstrate a realistic data poisoning attack by manipulating labels in the CIFAR-10 dataset and observing its impact on model behavior. We construct a clean and a poisoned training pipeline side by side, using a ResNet-style convolutional network to ensure stable, comparable learning dynamics. By selectively flipping a fraction of samples from a target class to a malicious class during training, we show how subtle corruption in the data pipeline can propagate into systematic misclassification at inference time.

    import torch
    import torch.nn as nn
    import torch.optim as optim
    import torchvision
    import torchvision.transforms…
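
The label-flipping step described above can be sketched without any deep learning dependencies. This is a minimal illustration on a plain list of integer labels, not the tutorial's code: the function name `flip_labels`, its parameters, and the toy label list are assumptions made for the example.

```python
import random
from typing import List, Sequence, Tuple


def flip_labels(
    labels: Sequence[int],
    target_class: int,
    malicious_class: int,
    flip_fraction: float,
    seed: int = 0,
) -> Tuple[List[int], List[int]]:
    """Relabel a random fraction of `target_class` samples as `malicious_class`.

    Returns the poisoned label list and the sorted indices that were flipped.
    The input sequence is left unchanged.
    """
    rng = random.Random(seed)  # seeded so the poisoned set is reproducible
    target_idx = [i for i, y in enumerate(labels) if y == target_class]
    num_flips = int(len(target_idx) * flip_fraction)
    flipped = sorted(rng.sample(target_idx, num_flips))
    poisoned = list(labels)
    for i in flipped:
        poisoned[i] = malicious_class
    return poisoned, flipped


clean = [0, 1, 2, 1, 1, 3, 1, 0, 1, 1]  # six samples belong to class 1
poisoned, flipped = flip_labels(clean, target_class=1, malicious_class=9, flip_fraction=0.5)
```

With `flip_fraction=0.5`, three of the six class-1 labels become class 9 and every other label is untouched. In a real pipeline the same index selection would be applied to the training set's label array before building the data loader, while the evaluation labels stay clean.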