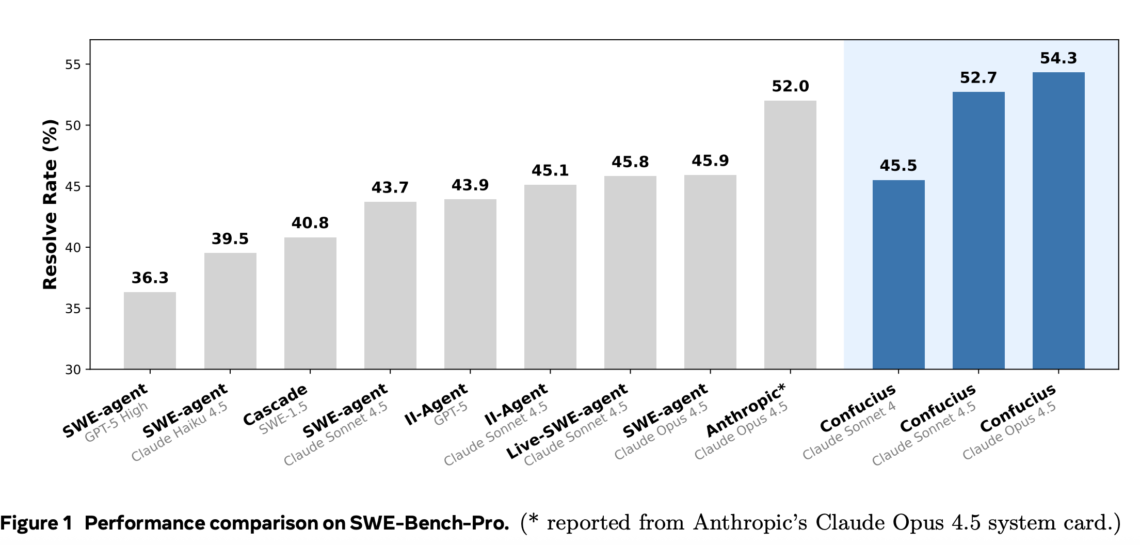

How far can a mid-sized language model go if the real innovation moves from the backbone into the agent scaffold and tool stack? Meta and Harvard researchers have released the Confucius Code Agent, an open-source AI software engineer built on the Confucius SDK and designed for industrial-scale software repositories and long-running sessions. The system targets real GitHub projects, complex test toolchains at evaluation time, and reproducible results on benchmarks such as SWE Bench Pro and SWE Bench Verified, while exposing the full scaffold for developers. Paper: https://arxiv.org/pdf/2512.10398

Confucius SDK, scaffolding around the model

The Confucius SDK…

-

In this tutorial, we use Ibis to build a portable, in-database feature engineering pipeline that looks and feels like Pandas but executes entirely inside the database. We connect to DuckDB, register data safely inside the backend, and define complex transformations using window functions and aggregations without ever pulling raw data into local memory. By keeping all transformations lazy and backend-agnostic, we show how to write analytics code once in Python and rely on Ibis to translate it into efficient SQL. `!pip -q install "ibis-framework[duckdb,examples]" duckdb pyarrow pandas` `import ibis`…

-

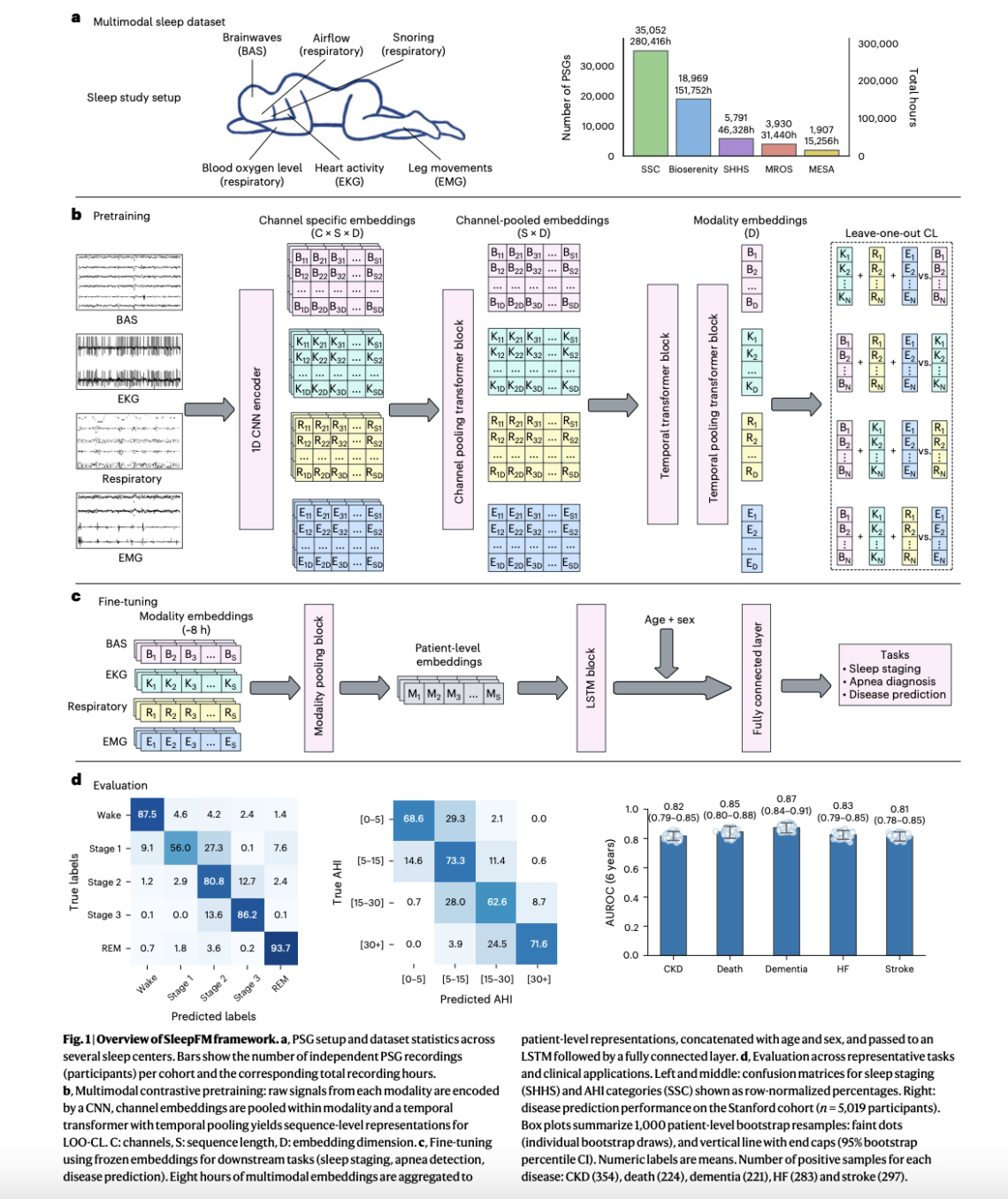

A team of Stanford Medicine researchers has introduced SleepFM Clinical, a multimodal sleep foundation model that learns from clinical polysomnography and predicts long-term disease risk from a single night of sleep. The research is published in Nature Medicine, and the team has released the clinical code as the open-source sleepfm-clinical repository on GitHub under the MIT license.

From overnight polysomnography to a general representation

Polysomnography records brain activity, eye movements, heart signals, muscle tone, breathing effort and oxygen saturation during a full night in a sleep lab. It is the gold standard test in sleep medicine, but…

-

In this tutorial, we build a unified Apache Beam pipeline that works in both batch and stream-like modes using the DirectRunner. We generate synthetic, event-time-aware data and apply fixed windowing with triggers and allowed lateness to show how Apache Beam consistently handles both on-time and late events. By switching only the input source, we keep the core aggregation logic identical, which makes it clear how Beam's event-time model, windows, and panes behave without relying on external streaming infrastructure. `!pip -q install -U "grpcio>=1.71.2" "grpcio-status>=1.71.2"` `!pip -q install -U apache-beam crcmod`…
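The idea of fixed event-time windows with allowed lateness can be sketched without any streaming infrastructure. The block below is a minimal plain-Python illustration, not Apache Beam's API: the names `window_start`, `classify`, and `aggregate`, the 60-second window, the 30-second lateness bound, and the simplified watermark model are all assumptions made for this example.

```python
from collections import defaultdict

WINDOW_SIZE = 60        # window length in seconds (hypothetical value)
ALLOWED_LATENESS = 30   # extra seconds a window stays open (hypothetical value)


def window_start(event_time, size=WINDOW_SIZE):
    """Return the start of the fixed window that contains event_time."""
    return (event_time // size) * size


def classify(event_time, watermark, size=WINDOW_SIZE, lateness=ALLOWED_LATENESS):
    """Label an event by comparing the watermark at arrival with its window end.

    Simplified model (an assumption, not Beam's exact semantics):
      watermark before window end               -> "on_time"
      watermark within the allowed lateness     -> "late"
      watermark beyond the allowed lateness     -> "dropped"
    """
    end = window_start(event_time, size) + size
    if watermark < end:
        return "on_time"
    if watermark < end + lateness:
        return "late"
    return "dropped"


def aggregate(events, size=WINDOW_SIZE, lateness=ALLOWED_LATENESS):
    """Sum values per window; events are (event_time, watermark, value) tuples."""
    sums = defaultdict(int)
    counts = defaultdict(lambda: {"on_time": 0, "late": 0, "dropped": 0})
    for event_time, watermark, value in events:
        start = window_start(event_time, size)
        status = classify(event_time, watermark, size, lateness)
        counts[start][status] += 1
        if status != "dropped":
            sums[start] += value  # late-but-allowed events still contribute
    return dict(sums), {k: dict(v) for k, v in counts.items()}


if __name__ == "__main__":
    sample = [(5, 10, 1), (59, 50, 2), (30, 70, 4), (10, 95, 8), (61, 65, 16)]
    totals, statuses = aggregate(sample)
    print(totals)
    print(statuses)
```

Because the aggregation only sees `(event_time, watermark, value)` tuples, the same function works whether the events come from a finished batch or are replayed one at a time, which mirrors the "switch only the input source" idea in the paragraph above.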

-

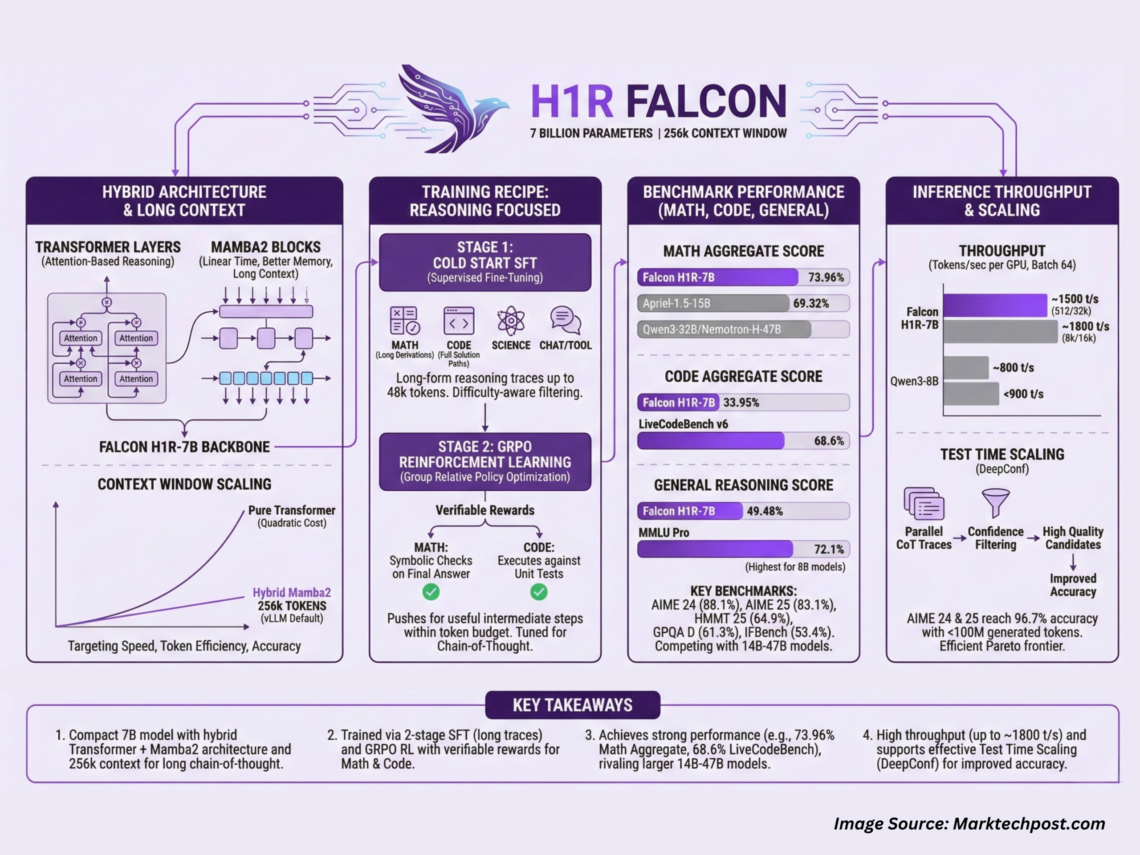

Technology Innovation Institute (TII), Abu Dhabi, has released Falcon-H1R-7B, a 7B-parameter reasoning-specialized model that matches or exceeds many 14B to 47B reasoning models in math, code and general benchmarks, while staying compact and efficient. It builds on Falcon H1 7B Base and is available on Hugging Face under the Falcon-H1R collection. Falcon-H1R-7B is interesting because it combines three design choices in one system: a hybrid Transformer and Mamba2 backbone, a very long context that reaches 256k tokens in standard vLLM deployments, and a training recipe that mixes supervised long-form reasoning with reinforcement learning using GRPO. Hybrid…

-

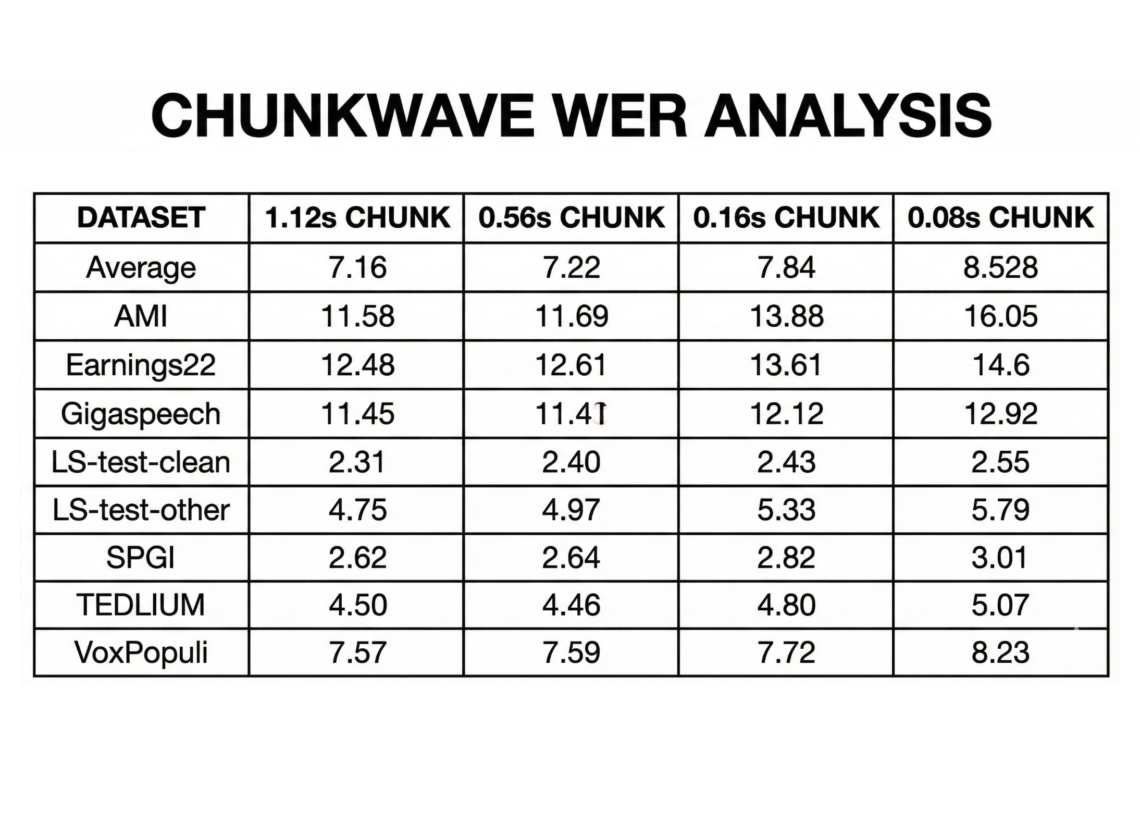

NVIDIA has released Nemotron Speech ASR, a new streaming English transcription model built specifically for low-latency voice agents and live captioning. The checkpoint nvidia/nemotron-speech-streaming-en-0.6b on Hugging Face combines a cache-aware FastConformer encoder with an RNNT decoder, and is tuned for both streaming and batch workloads on modern NVIDIA GPUs.

Model design, architecture and input assumptions

Nemotron Speech ASR (Automatic Speech Recognition) is a 600M-parameter model based on a cache-aware FastConformer encoder with 24 layers and an RNNT decoder. The encoder uses aggressive 8x convolutional downsampling to reduce the number of time steps, which directly lowers…

-

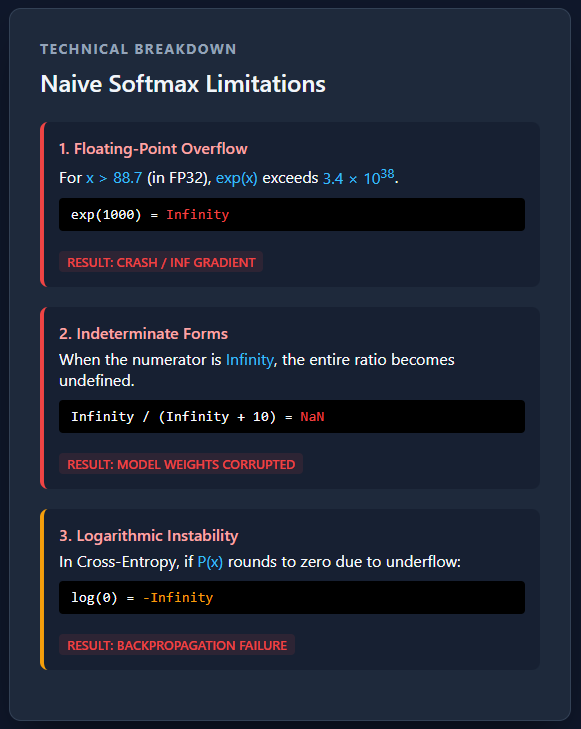

In deep learning, classification models don't just need to make predictions; they also need to express confidence. That's where the Softmax activation function comes in. Softmax takes the raw, unbounded scores produced by a neural network and transforms them into a well-defined probability distribution, making it possible to interpret each output as the likelihood of a specific class. This property makes Softmax a cornerstone of multi-class classification tasks, from image recognition to language modeling. In this article, we'll build an intuitive understanding of how Softmax works and why its implementation details matter more than they first appear.…
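One implementation detail that commonly matters for Softmax is numerical stability. The excerpt is truncated, so treating this as the detail the article has in mind is an assumption. The sketch below uses only the Python standard library; `softmax_naive` and `softmax_stable` are names chosen for this illustration. It relies on the identity softmax(z) = softmax(z - max(z)), which leaves the probabilities unchanged while keeping every exponent at or below zero.

```python
import math


def softmax_naive(logits):
    """Direct translation of the formula; overflows for large logits."""
    exps = [math.exp(z) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_stable(logits):
    """Subtract the maximum logit first so every exponent is <= 0."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    probs = softmax_stable([2.0, 1.0, 0.1])
    print(probs, sum(probs))

    big = [1000.0, 999.0, 998.0]
    try:
        softmax_naive(big)
    except OverflowError as err:
        # math.exp(1000.0) exceeds the range of a Python float
        print("naive softmax overflowed:", err)
    print(softmax_stable(big))
```

The shifted version returns the same distribution for `[1000.0, 999.0, 998.0]` as for `[2.0, 1.0, 0.0]`, since only the differences between logits affect the result.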

-

In this tutorial, we build an advanced Agentic AI system using LangGraph and OpenAI models that goes beyond simple planner-executor loops. We implement adaptive deliberation, where the agent dynamically decides between fast and deep reasoning; a Zettelkasten-style agentic memory graph that stores atomic knowledge and automatically links related experiences; and a governed tool-use mechanism that enforces constraints during execution. By combining structured state management, memory-aware retrieval, reflexive learning, and controlled tool invocation, we demonstrate how modern agentic systems can reason, act, learn, and evolve rather than respond in a single pass. `!pip -q`…

-

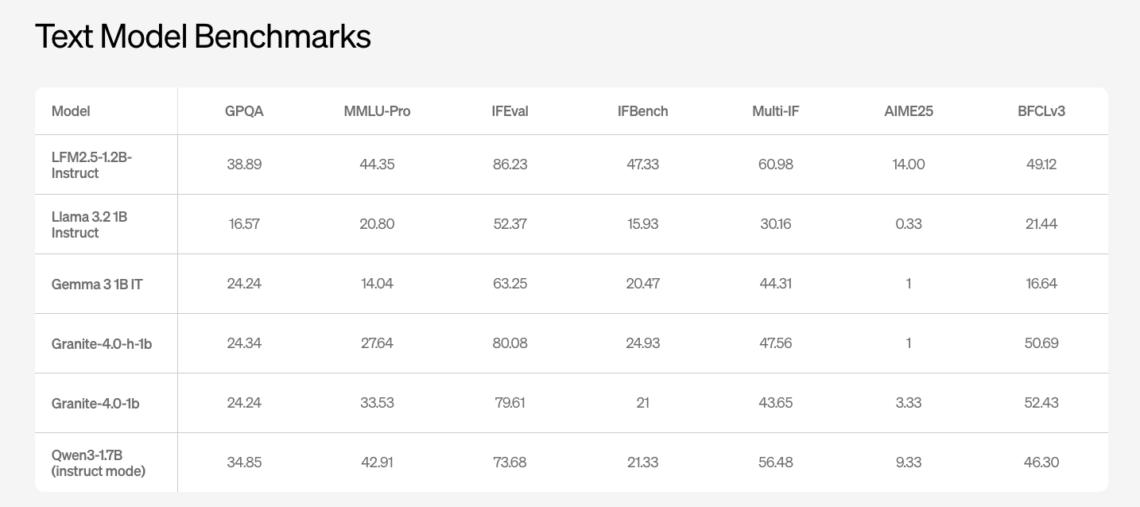

Liquid AI has introduced LFM2.5, a new generation of small foundation models built on the LFM2 architecture and focused on on-device and edge deployments. The model family includes LFM2.5-1.2B-Base and LFM2.5-1.2B-Instruct and extends to Japanese, vision-language, and audio-language variants. It is released as open weights on Hugging Face and exposed through the LEAP platform.

Architecture and training recipe

LFM2.5 keeps the hybrid LFM2 architecture, which was designed for fast and memory-efficient inference on CPUs and NPUs, and scales the data and post-training pipeline. Pretraining for the 1.2-billion-parameter backbone is extended from 10T to…

-

Marktechpost has released AI2025Dev, its 2025 analytics platform (available to AI developers and researchers without any signup or login), designed to convert the year's AI activity into a queryable dataset spanning model releases, openness, training scale, benchmark performance, and ecosystem participants. Marktechpost is a California-based AI news platform covering machine learning, deep learning, and data science research.

What's new in this release

The 2025 release of AI2025Dev expands coverage across two layers: (1) release analytics, focusing on model and framework launches, license posture, vendor activity, and feature-level segmentation; and (2) ecosystem indexes, including curated “Top 100” collections that connect models to…