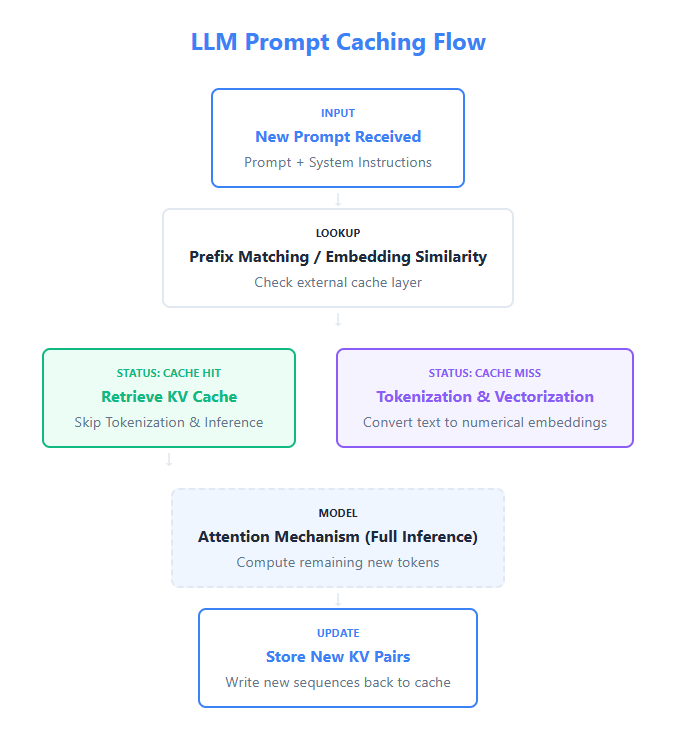

Question: Imagine your company’s LLM API costs suddenly doubled last month. A deeper analysis shows that while user inputs look different at a text level, many of them are semantically similar. As an engineer, how would you identify and reduce this redundancy without impacting response quality? What is Prompt Caching? Prompt caching is an optimization technique used in AI systems to improve speed and reduce cost. Instead of sending the same long instructions, documents, or examples to the model repeatedly, the system reuses previously processed prompt content such as static instructions, prompt prefixes, or shared context. This helps save both…
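The prefix-reuse idea in this excerpt can be illustrated with a small in-memory cache. This is only a sketch under stated assumptions: `PrefixCache` and `fake_process` are hypothetical names, not any provider's API, and real prompt caching reuses model-side state inside the serving stack rather than a Python string.

```python
import hashlib
from typing import Callable, Dict


class PrefixCache:
    """Hypothetical in-memory cache keyed by a hash of the static prompt prefix."""

    def __init__(self, process: Callable[[str], str]) -> None:
        self._process = process  # stand-in for the costly prefix processing
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def get(self, prefix: str) -> str:
        # Identical prefixes hash to the same key, so they are processed once.
        key = hashlib.sha256(prefix.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = self._process(prefix)
        return self._store[key]


def fake_process(prefix: str) -> str:
    # Placeholder only: a real system would reuse model-side state here.
    return f"processed:{len(prefix)}"


cache = PrefixCache(fake_process)
system_prompt = "You are a support assistant. Follow the policy document."

# Two different user inputs share the same static prefix.
for user_input in ["reset my password", "how do I reset a password?"]:
    processed_prefix = cache.get(system_prompt)
    full_prompt = f"{processed_prefix}\n{user_input}"
```

The second request hits the cache because only the user-specific suffix changed. An exact hash does not catch inputs that are merely semantically similar; that would need a similarity measure over embeddings, which this sketch does not attempt.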

-

In this tutorial, we build an advanced multi-agent incident response system using AgentScope. We orchestrate multiple ReAct agents, each with a clearly defined role such as routing, triage, analysis, writing, and review, and connect them through structured routing and a shared message hub. By integrating OpenAI models, lightweight tool calling, and a simple internal runbook, we demonstrate how complex, real-world agentic workflows can be composed in pure Python without heavy infrastructure or brittle glue code. Check out the FULL CODES here. !pip -q install "agentscope>=0.1.5" pydantic nest_asyncio import os, json, re from getpass import getpass from typing import Literal from pydantic…

-

Zlab Princeton researchers have released LLM-Pruning Collection, a JAX-based repository that consolidates major pruning algorithms for large language models into a single, reproducible framework. It targets one concrete goal: making it easy to compare block-level, layer-level and weight-level pruning methods under a consistent training and evaluation stack on both GPUs and TPUs. What LLM-Pruning Collection Contains? It is described as a JAX-based repo for LLM pruning and is organized into three main directories. `pruning` holds implementations of several pruning methods: Minitron, ShortGPT, Wanda, SparseGPT, Magnitude, Sheared Llama and LLM-Pruner. `training` provides integration with FMS-FSDP for…

-

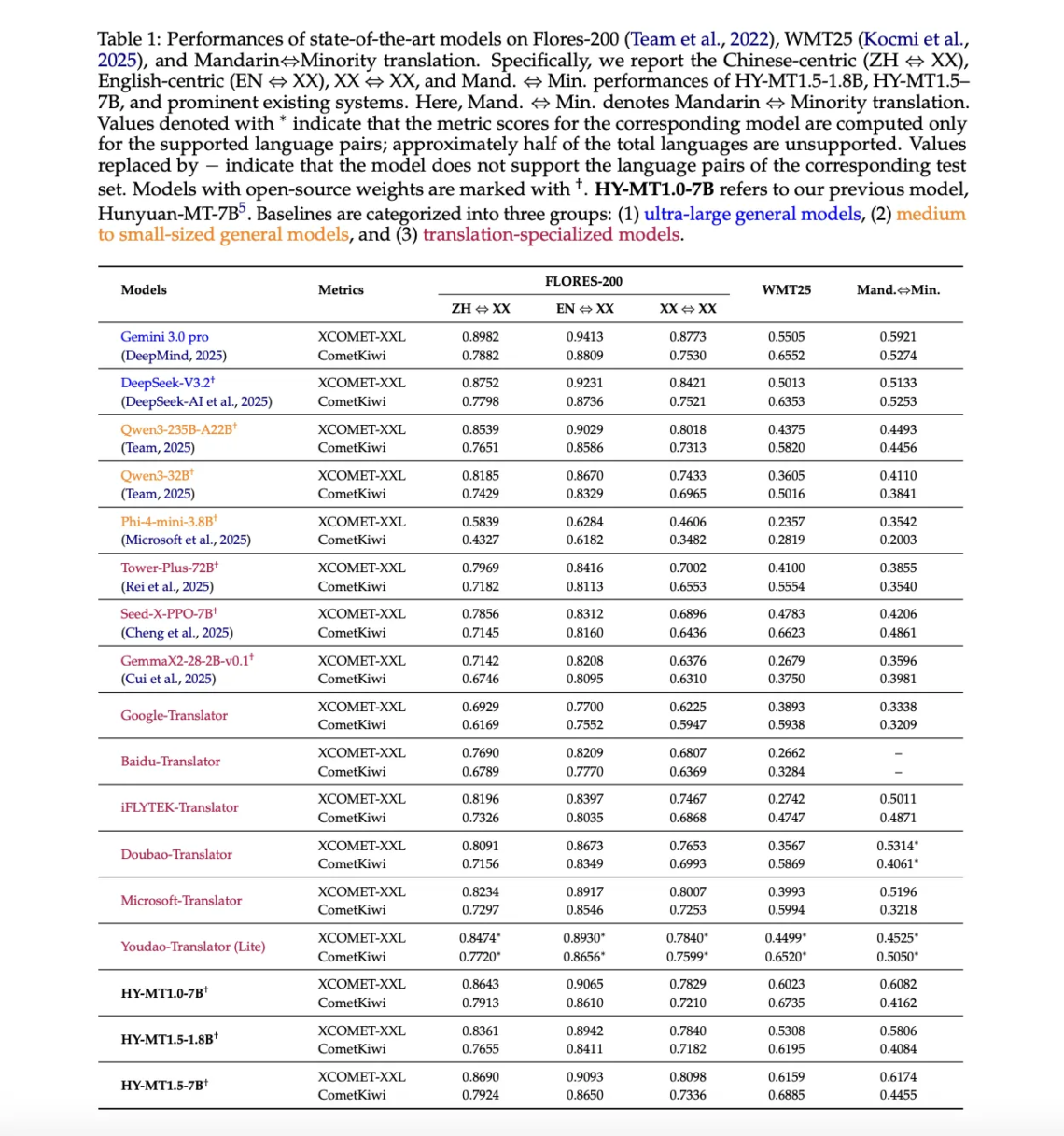

Tencent Hunyuan researchers have released HY-MT1.5, a multilingual machine translation family that targets both mobile devices and cloud systems with the same training recipe and metrics. HY-MT1.5 consists of 2 translation models, HY-MT1.5-1.8B and HY-MT1.5-7B, supports mutual translation across 33 languages with 5 ethnic and dialect variations, and is available on GitHub and Hugging Face under open weights. Model family and deployment targets HY-MT1.5-7B is an upgraded version of the WMT25 championship system Hunyuan-MT-7B. It is optimized for explanatory translation and mixed language scenarios, and adds native support for terminology intervention, contextual translation and formatted translation. HY-MT1.5-1.8B is the compact…

-

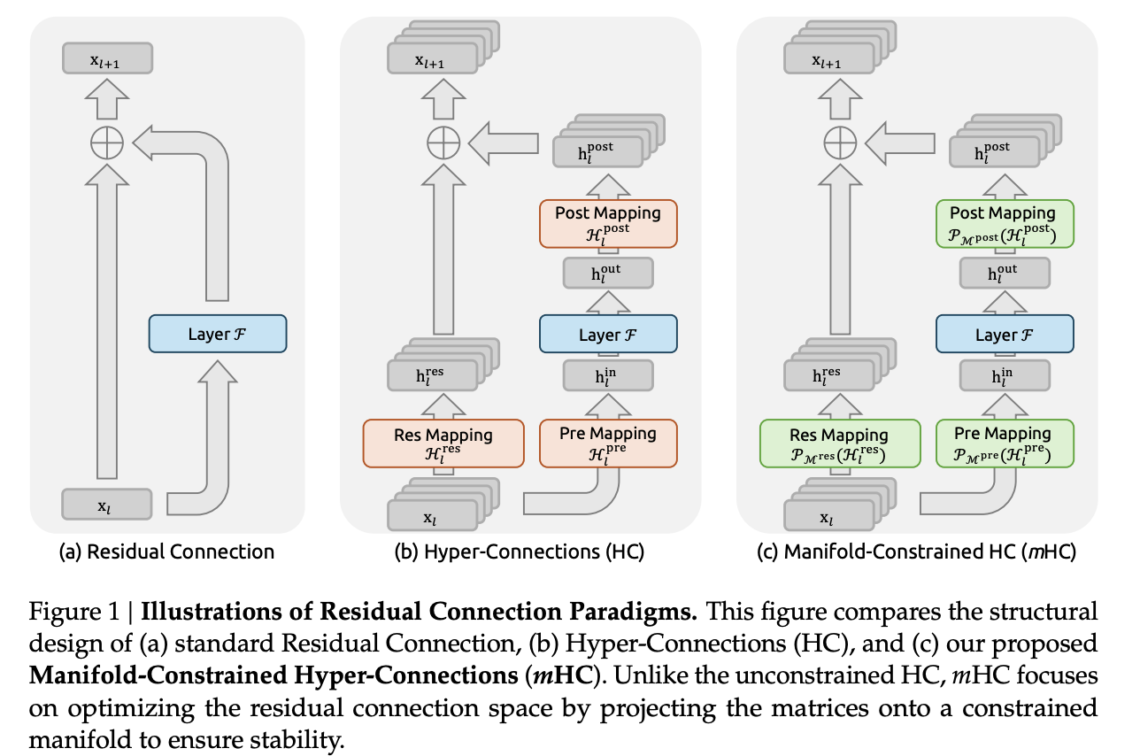

DeepSeek researchers are trying to solve a precise issue in large language model training. Residual connections made very deep networks trainable, hyper connections widened that residual stream, and training then became unstable at scale. The new method mHC, Manifold Constrained Hyper Connections, keeps the richer topology of hyper connections but locks the mixing behavior on a well defined manifold so that signals remain numerically stable in very deep stacks. https://www.arxiv.org/pdf/2512.24880 From Residual Connections To Hyper Connections Standard residual connections, as in ResNets and Transformers, propagate activations with $x_{l+1} = x_l + F(x_l, W_l)$. The identity path preserves magnitude and keeps gradients usable even when you stack…
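As a small illustration of the standard residual update $x_{l+1} = x_l + F(x_l, W_l)$, the sketch below stacks residual steps in plain Python. It does not implement hyper connections or mHC. The function names and the toy layer function `scaled` are assumptions made for this example.

```python
from typing import Callable, List

Vector = List[float]
LayerFn = Callable[[Vector, float], Vector]


def residual_step(x: Vector, w: float, f: LayerFn) -> Vector:
    """One residual update: x_next = x + F(x, w)."""
    update = f(x, w)
    return [xi + ui for xi, ui in zip(x, update)]


def scaled(x: Vector, w: float) -> Vector:
    # Toy stand-in for F: scales the input by a scalar weight.
    return [w * xi for xi in x]


def run_stack(x: Vector, weights: List[float], f: LayerFn) -> Vector:
    """Apply one residual step per weight, in order."""
    for w in weights:
        x = residual_step(x, w, f)
    return x


x0 = [1.0, -2.0, 0.5]

# With F contributing nothing, the identity path carries x0 through 50 layers.
identity_out = run_stack(x0, [0.0] * 50, scaled)

# With w = 0.5 each step multiplies by 1.5: 1.0 -> 1.5 -> 2.25.
small_out = run_stack([1.0], [0.5, 0.5], scaled)
```

The first call shows why the identity path matters: when the learned branch contributes nothing, the input passes through the whole stack unchanged.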

-

In this tutorial, we build an advanced yet practical multi-agent system using OpenAI Swarm that runs in Colab. We demonstrate how we can orchestrate specialized agents, such as a triage agent, an SRE agent, a communications agent, and a critic, to collaboratively handle a real-world production incident scenario. By structuring agent handoffs, integrating lightweight tools for knowledge retrieval and decision ranking, and keeping the implementation clean and modular, we show how Swarm enables us to design controllable, agentic workflows without heavy frameworks or complex infrastructure. Check out the FULL CODES HERE. !pip -q install -U openai !pip -q install -U "git+https://github.com/openai/swarm.git"…

-

In this tutorial, we build an advanced red-team evaluation harness using Strands Agents to stress-test a tool-using AI system against prompt-injection and tool-misuse attacks. We treat agent safety as a first-class engineering problem by orchestrating multiple agents that generate adversarial prompts, execute them against a guarded target agent, and judge the responses with structured evaluation criteria. By running everything in a Colab workflow and using an OpenAI model via Strands, we demonstrate how agentic systems can be used to evaluate, supervise, and harden other agents in a realistic, measurable way. Check out the FULL CODES here. !pip -q install "strands-agents[openai]" strands-agents-tools pydantic…

-

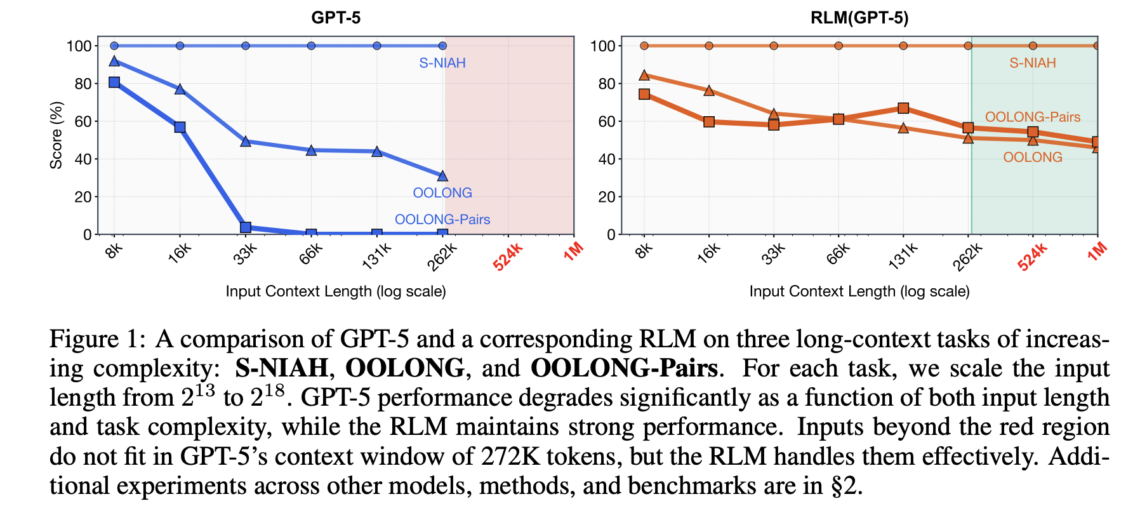

Recursive Language Models aim to break the usual trade off between context length, accuracy and cost in large language models. Instead of forcing a model to read a giant prompt in one pass, RLMs treat the prompt as an external environment and let the model decide how to inspect it with code, then recursively call itself on smaller pieces. https://arxiv.org/pdf/2512.24601 The Basics The full input is loaded into a Python REPL as a single string variable. The root model, for example GPT-5, never sees that string directly in its context. Instead, it receives a system prompt that explains how to…
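The recursive decomposition described above can be sketched in a few lines. This is a heavily simplified illustration, not the paper's method: the split is a fixed halving rather than something the model decides, and `stub_model` is a hypothetical stand-in for an LLM call that only counts a keyword.

```python
def stub_model(question: str, chunk: str) -> int:
    """Hypothetical stand-in for an LLM call on a small chunk.

    Here it just counts how often the word `question` occurs in the chunk.
    """
    return chunk.split().count(question)


def recursive_query(question: str, text: str, max_words: int = 4) -> int:
    """Answer over a long input by recursing on smaller pieces.

    The full text is held as an ordinary Python string; the "model" only
    ever sees chunks of at most `max_words` words.
    """
    words = text.split()
    if len(words) <= max_words:
        return stub_model(question, text)
    mid = len(words) // 2
    left = " ".join(words[:mid])
    right = " ".join(words[mid:])
    # Combine the sub-answers; for counting, combination is addition.
    return (recursive_query(question, left, max_words)
            + recursive_query(question, right, max_words))


long_input = "error ok ok error ok error ok ok ok error"
total = recursive_query("error", long_input)
```

The point of the sketch is the control flow: the long input stays outside the model's context, and each call works only on a piece small enough to handle.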

-

Cloudflare has open sourced tokio-quiche, an asynchronous QUIC and HTTP/3 Rust library that wraps its battle tested quiche implementation with the Tokio runtime. The library has been refined inside production systems such as Apple iCloud Private Relay, next generation Oxy based proxies and WARP’s MASQUE client, where it handles millions of HTTP/3 requests per second with low latency and high throughput. tokio-quiche targets Rust teams that want QUIC and HTTP/3 without writing their own UDP and event loop integration code. From quiche to tokio-quiche quiche is Cloudflare’s open source QUIC and HTTP/3 implementation written in Rust and designed as a…

-

In this tutorial, we implement an agentic AI pattern using LangGraph that treats reasoning and action as a transactional workflow rather than a single-shot decision. We model a two-phase commit system in which an agent stages reversible changes, validates strict invariants, pauses for human approval via graph interrupts, and only then commits or rolls back. With this, we demonstrate how agentic systems can be designed with safety, auditability, and controllability at their core, moving beyond reactive chat agents toward structured, governance-aware AI workflows that run reliably in Google Colab using OpenAI models. Check out the Full Codes here. !pip -q install…
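The stage, validate, approve, then commit-or-roll-back flow can be sketched without any framework. This is a minimal plain-Python illustration, not the tutorial's LangGraph code: `two_phase_update`, `withdraw`, and the approval callback are hypothetical names, and the human approval step is reduced to a function call.

```python
from copy import deepcopy
from typing import Callable, Dict, Tuple

State = Dict[str, int]


def two_phase_update(
    state: State,
    change: Callable[[State], None],
    invariant: Callable[[State], bool],
    approve: Callable[[State], bool],
) -> Tuple[State, str]:
    """Stage a change on a copy, then commit only if all checks pass."""
    staged = deepcopy(state)
    change(staged)  # phase 1: apply the change to the staged copy only
    if not invariant(staged):
        return state, "rolled_back:invariant"
    if not approve(staged):  # stand-in for a human approval pause
        return state, "rolled_back:rejected"
    return staged, "committed"  # phase 2: the staged copy becomes the state


def withdraw(amount: int) -> Callable[[State], None]:
    def apply(s: State) -> None:
        s["balance"] -= amount
    return apply


def non_negative(s: State) -> bool:
    return s["balance"] >= 0


def always_approve(s: State) -> bool:
    return True


accounts = {"balance": 100}
ok_state, ok_status = two_phase_update(
    accounts, withdraw(30), non_negative, always_approve)
bad_state, bad_status = two_phase_update(
    accounts, withdraw(500), non_negative, always_approve)
```

Because every change is applied to a deep copy, a failed invariant or a rejected approval leaves the original state untouched, which is what makes the rollback trivial here.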