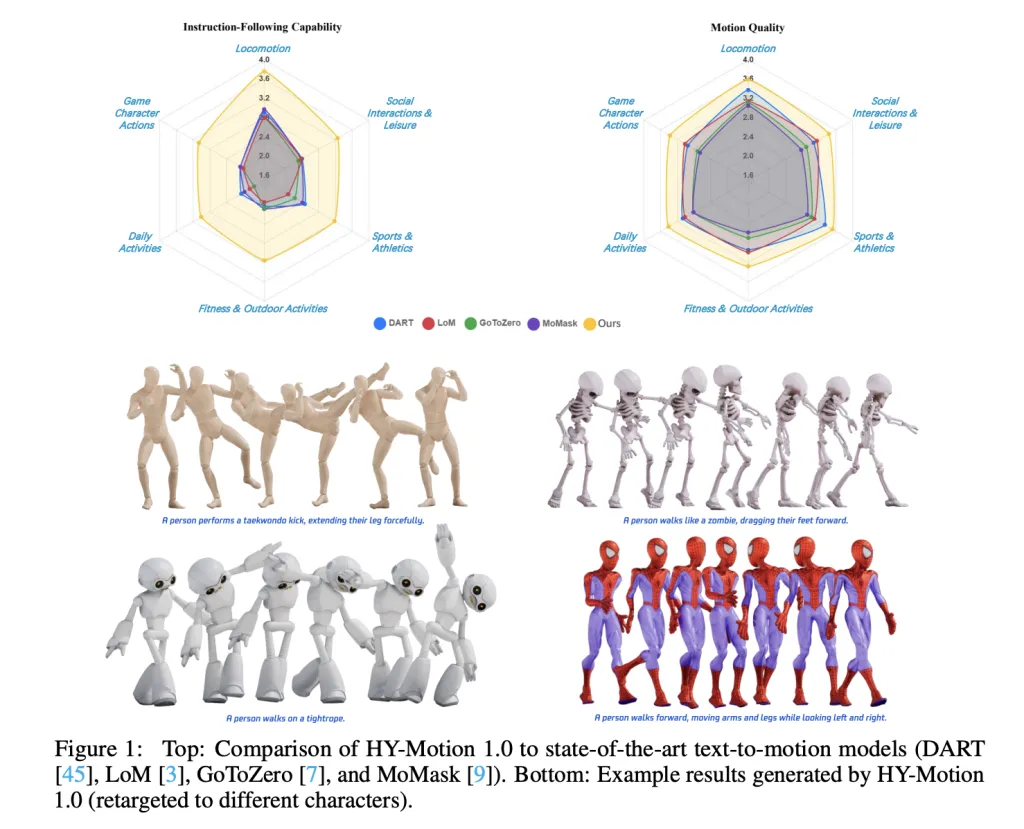

Tencent Hunyuan’s 3D Digital Human team has released HY-Motion 1.0, an open-weight family of text-to-3D human motion generation models that scales Diffusion Transformer (DiT) based Flow Matching to 1B parameters in the motion domain. The models turn a natural-language prompt plus an expected duration into 3D human motion clips on a unified SMPL-H skeleton, and are available on GitHub and Hugging Face with code, checkpoints, and a Gradio interface for local use.

https://arxiv.org/pdf/2512.23464

What does HY-Motion 1.0 provide for developers?

HY-Motion 1.0 is a series of text-to-3D human motion generation models built on a Diffusion Transformer (DiT) and trained with a Flow Matching objective.…

-


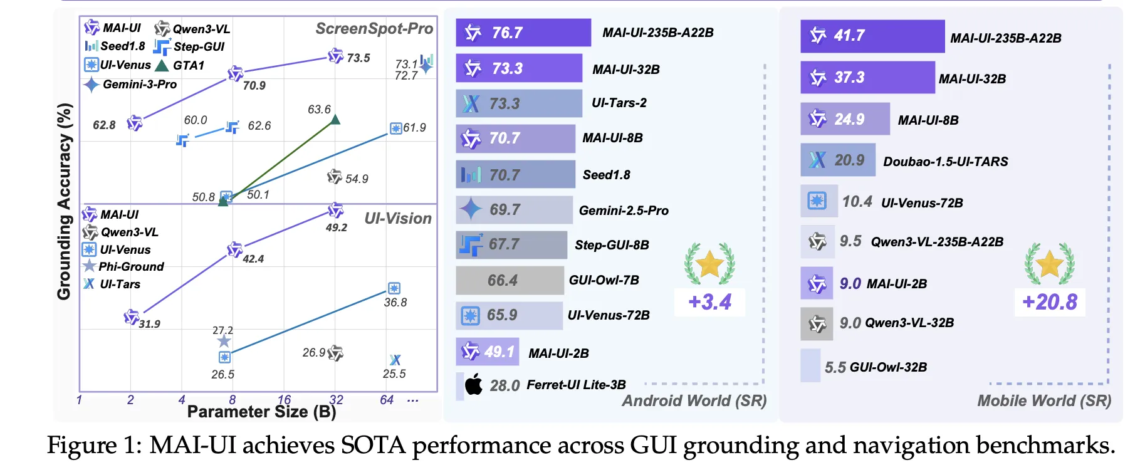

Alibaba Tongyi Lab has released MAI-UI, a family of foundation GUI agents. It natively integrates MCP tool use, agent-user interaction, device-cloud collaboration, and online RL, establishing state-of-the-art results in general GUI grounding and mobile GUI navigation and surpassing Gemini-2.5-Pro, Seed1.8, and UI-Tars-2 on AndroidWorld. The system targets three gaps that early GUI agents often ignore: native agent-user interaction, MCP tool integration, and a device-cloud collaboration architecture that keeps privacy-sensitive work on device while still using large cloud models when needed.

https://arxiv.org/pdf/2512.22047

What is MAI-UI?

MAI-UI is a family of multimodal GUI agents built on Qwen3 VL, with…

-

In this tutorial, we simulate a privacy-preserving fraud detection system using Federated Learning, without relying on heavyweight frameworks or complex infrastructure. We build a clean, CPU-friendly setup that mimics ten independent banks, each training a local fraud-detection model on its own highly imbalanced transaction data. We coordinate these local updates through a simple FedAvg aggregation loop, which improves a global model while ensuring that no raw transaction data ever leaves a client. Alongside this, we integrate OpenAI to support post-training analysis and risk-oriented reporting, demonstrating how federated learning outputs can be translated into decision-ready insights.…
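To make the FedAvg idea concrete, here is a minimal sketch, not the tutorial's actual code. It assumes a tiny pure-Python logistic regression and synthetic, imbalanced data; the names `make_client_data`, `local_update`, `fedavg`, and `run_federated`, the two-feature data layout, and all hyperparameters are hypothetical choices made for illustration. Only model weights are passed to the aggregator, never the per-client rows.

```python
import math
import random


def sigmoid(z):
    # Clamp the input so math.exp cannot overflow on extreme values.
    z = max(-30.0, min(30.0, z))
    return 1.0 / (1.0 + math.exp(-z))


def make_client_data(rng, n=200, fraud_rate=0.05):
    """Hypothetical synthetic transactions: two features, rare fraud label."""
    data = []
    for _ in range(n):
        y = 1 if rng.random() < fraud_rate else 0
        # Fraud rows are shifted so a linear model can pick them up.
        x = [rng.gauss(2.0 * y, 1.0), rng.gauss(2.0 * y, 1.0)]
        data.append((x, y))
    return data


def local_update(global_w, data, lr=0.1, epochs=5):
    """A few full-batch gradient steps on logistic loss, starting from global_w."""
    w = list(global_w)  # layout: [w1, w2, bias]
    for _ in range(epochs):
        grad = [0.0, 0.0, 0.0]
        for x, y in data:
            err = sigmoid(w[0] * x[0] + w[1] * x[1] + w[2]) - y
            grad[0] += err * x[0]
            grad[1] += err * x[1]
            grad[2] += err
        w = [wi - lr * gi / len(data) for wi, gi in zip(w, grad)]
    return w


def fedavg(client_weights, client_sizes):
    """Average client weight vectors, weighted by local dataset size."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


def run_federated(num_clients=10, rounds=5, seed=0):
    rng = random.Random(seed)
    # Each client's data stays in its own list; the server only sees weights.
    clients = [make_client_data(rng) for _ in range(num_clients)]
    global_w = [0.0, 0.0, 0.0]
    for _ in range(rounds):
        updates = [local_update(global_w, d) for d in clients]
        global_w = fedavg(updates, [len(d) for d in clients])
    return global_w


if __name__ == "__main__":
    print(run_federated())
```

Weighting by dataset size means a bank with more transactions pulls the global model further toward its local solution; with equal-sized clients, as in this sketch, the aggregation reduces to a plain mean.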

-

LLMRouter is an open-source routing library from the U Lab at the University of Illinois Urbana-Champaign that treats model selection as a first-class system problem. It sits between applications and a pool of LLMs and chooses a model for each query based on task complexity, quality targets, and cost, all exposed through a unified Python API and CLI. The project ships with more than 16 routing models, a data generation pipeline over 11 benchmarks, and a plugin system for custom routers.

Router families and supported models

LLMRouter organizes routing algorithms into four families, Single-Round Routers, Multi-Round Routers,…
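The per-query routing idea described above can be illustrated with a toy, single-round router. This is a sketch under stated assumptions and does not use LLMRouter's API: `ModelSpec`, `estimate_complexity`, `route`, the keyword heuristic, and the quality and cost numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelSpec:
    name: str
    quality: float      # hypothetical capability score in [0, 1]
    cost_per_1k: float  # hypothetical price per 1k tokens


def estimate_complexity(query: str) -> float:
    """Toy heuristic: longer queries and reasoning keywords count as harder."""
    words = query.lower().split()
    score = min(len(words) / 50.0, 1.0)
    if any(k in words for k in ("prove", "derive", "debug", "optimize")):
        score = max(score, 0.8)
    return score


def route(query: str, pool, min_quality: float = 0.0) -> ModelSpec:
    """Pick the cheapest model whose quality covers the estimated need."""
    need = max(estimate_complexity(query), min_quality)
    eligible = [m for m in pool if m.quality >= need]
    if not eligible:
        # Nothing meets the target: fall back to the strongest model.
        return max(pool, key=lambda m: m.quality)
    return min(eligible, key=lambda m: m.cost_per_1k)


if __name__ == "__main__":
    pool = [
        ModelSpec("small", 0.4, 0.1),
        ModelSpec("medium", 0.7, 0.5),
        ModelSpec("large", 0.95, 2.0),
    ]
    print(route("What is 2 + 2?", pool).name)
    print(route("Prove that the square root of two is irrational", pool).name)
```

A learned router would replace the keyword heuristic with a trained predictor of per-model quality, but the selection step, cheapest model that clears the quality target, can stay the same.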

-

In this tutorial, we build an advanced, end-to-end multi-agent research workflow using the CAMEL framework. We design a coordinated society of agents (Planner, Researcher, Writer, Critic, and Finalizer) that collaboratively transforms a high-level topic into a polished, evidence-grounded research brief. We securely integrate the OpenAI API, orchestrate agent interactions programmatically, and add lightweight persistent memory to retain knowledge across runs. By structuring the system around clear roles, JSON-based contracts, and iterative refinement, we demonstrate how CAMEL can be used to construct reliable, controllable, and scalable agentic pipelines.

`!pip -q install "camel-ai[all]" "python-dotenv" "rich"`

`import os`…

-

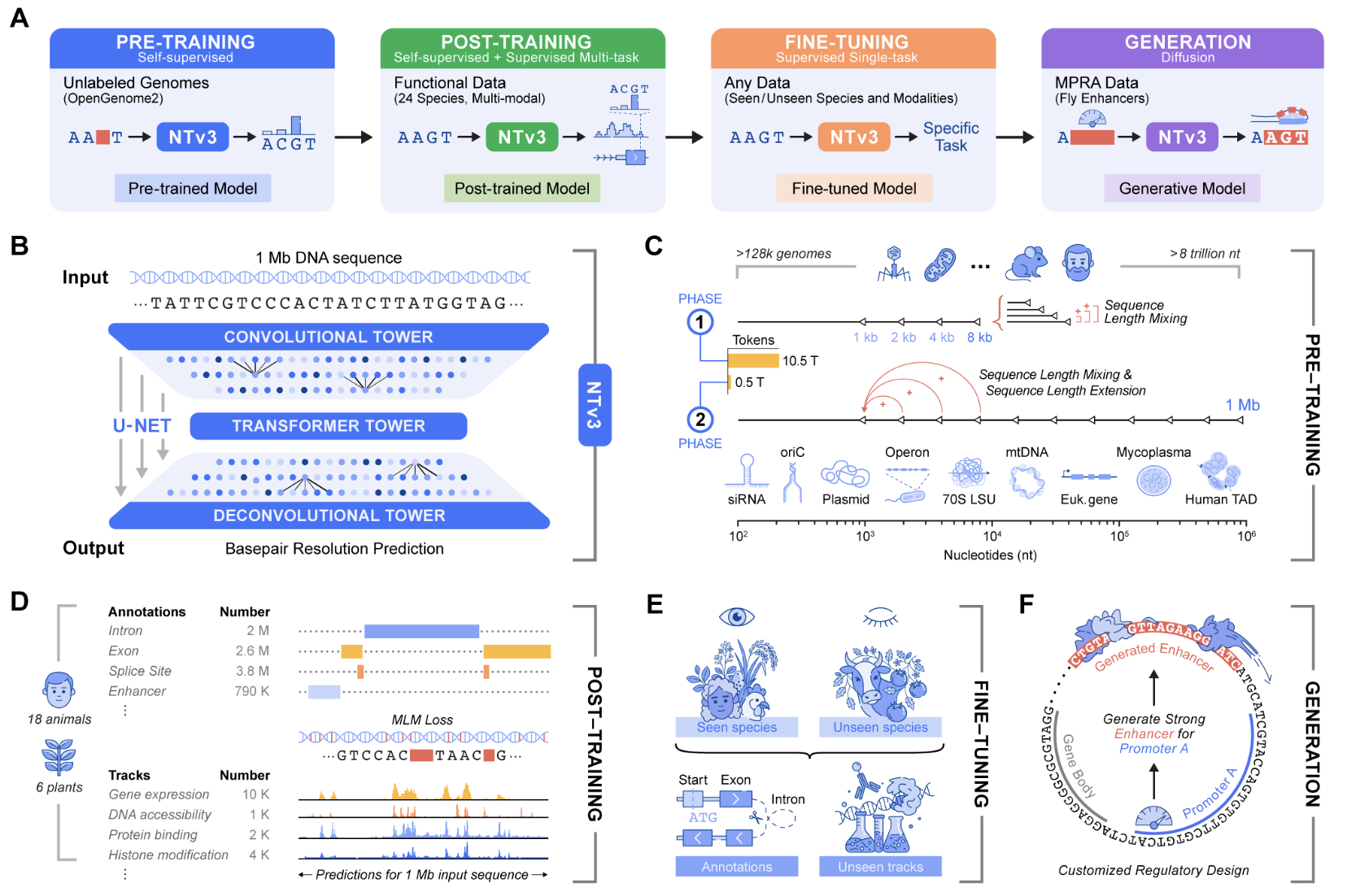

Genomic prediction and design now require models that connect local motifs with megabase-scale regulatory context and that operate across many organisms. Nucleotide Transformer v3, or NTv3, is InstaDeep’s new multi-species genomics foundation model for this setting. It unifies representation learning, functional track and genome annotation prediction, and controllable sequence generation in a single backbone that runs on 1 Mb contexts at single-nucleotide resolution. Earlier Nucleotide Transformer models already showed that self-supervised pretraining on thousands of genomes yields strong features for molecular phenotype prediction. The original series included models from 50M to 2.5B parameters trained on 3,200…

-

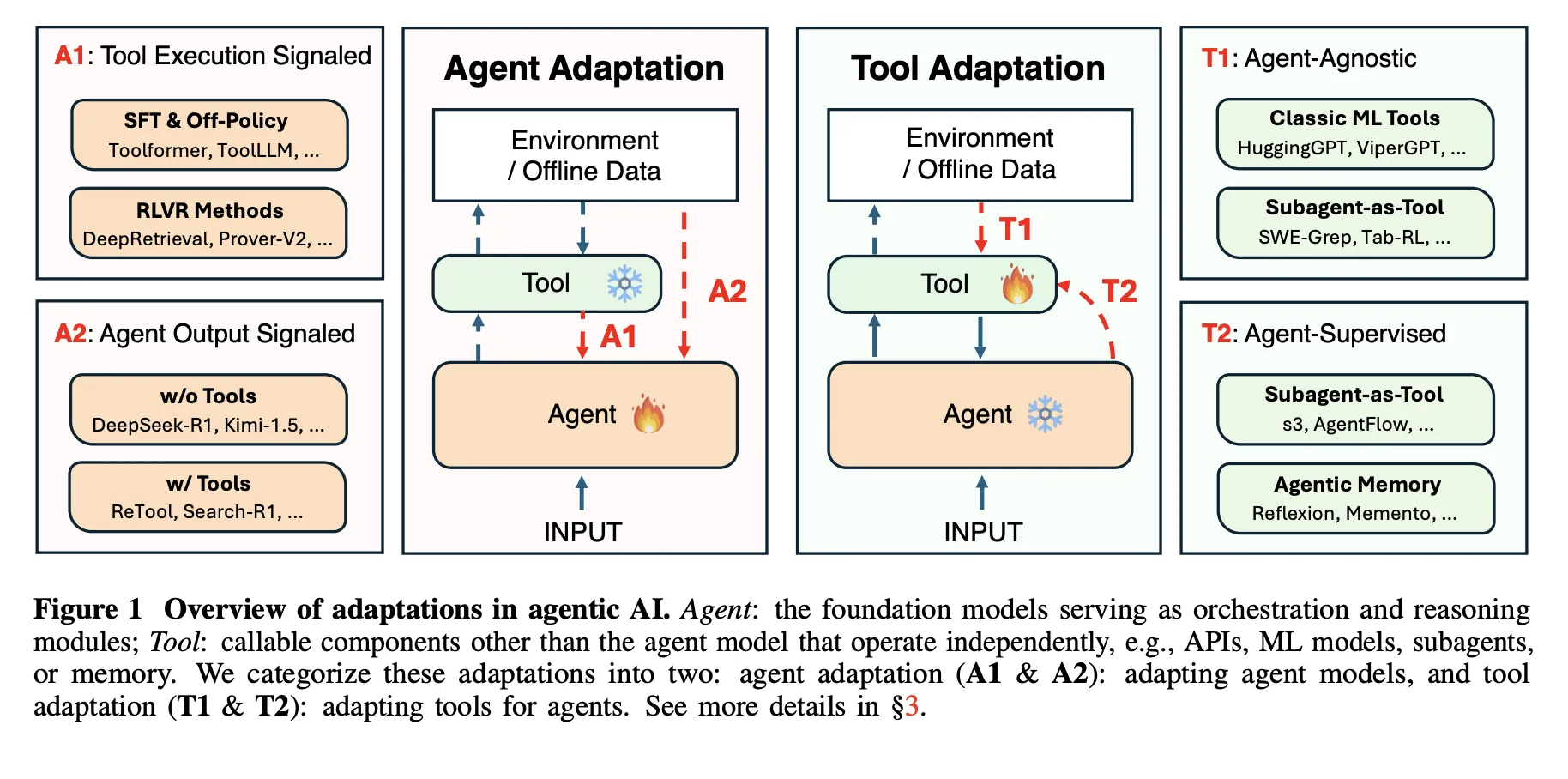

Agentic AI systems sit on top of large language models and connect to tools, memory, and external environments. They already support scientific discovery, software development, and clinical research, yet they still struggle with unreliable tool use, weak long-horizon planning, and poor generalization. The latest research paper ‘Adaptation of Agentic AI’ from Stanford, Harvard, UC Berkeley, and Caltech proposes a unified view of how these systems should adapt and maps existing methods into a compact, mathematically defined framework.

How does this paper model an agentic AI system?

The survey models an agentic AI system as a foundation model agent along…

-

In this tutorial, we build an advanced, fully autonomous logistics simulation in which multiple smart delivery trucks operate within a dynamic city-wide road network. We design the system so that each truck behaves as an agent capable of bidding on delivery orders, planning optimal routes, managing battery levels, seeking charging stations, and maximizing profit through self-interested decision-making. Through each code snippet, we explore how agentic behaviors emerge from simple rules, how competition shapes order allocation, and how a graph-based world enables realistic movement, routing, and resource constraints.

`import networkx as nx`

`import matplotlib.pyplot as plt`…

-

Just months after releasing M2—a fast, low-cost model designed for agents and code—MiniMax has introduced an enhanced version: MiniMax M2.1. M2 already stood out for its efficiency, running at roughly 8% of the cost of Claude Sonnet while delivering significantly higher speed. More importantly, it introduced a different computational and reasoning pattern, particularly in how the model structures and executes its thinking during complex code and tool-driven workflows. M2.1 builds on this foundation, bringing tangible improvements across key areas: better code quality, smarter instruction following, cleaner reasoning, and stronger performance across multiple programming languages. These upgrades extend the original strengths…

-

In this tutorial, we build a “Zettelkasten” memory system for Agentic AI, a “living” architecture that organizes information much like the human brain. We move beyond standard retrieval methods to construct a dynamic knowledge graph in which an agent autonomously decomposes inputs into atomic facts, links them semantically, and even “sleeps” to consolidate memories into higher-order insights. Using Google’s Gemini, we implement a robust solution that addresses real-world API constraints, ensuring our agent not only stores data but also actively understands the evolving context of our projects.

`!pip install -q -U google-generativeai`…